Copilot Researcher, the deep research agent that is part of Microsoft 365 Copilot, has just received a major update that makes it the first multi-model researcher of its kind, raising the bar for accuracy, depth and confidence with its multi-model approach and “Critique and Council” capabilities. This update is part of the Wave 3 update of Microsoft 365 Copilot, an effort to pair AI intelligence with enterprise trust, moving Copilot from a tool workers try to one they rely on. In short:

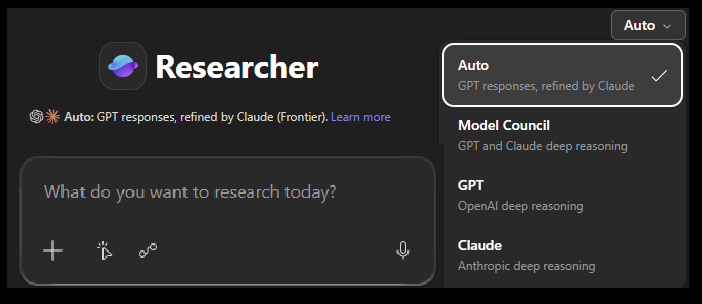

Council = multiple models write independently, then a third model compares them.

Critique = one model writes, another model reviews.

Critique (Default Mode)

Critique is the new default mode in Researcher. Designed for deep and complex research tasks, it separates research generation from evaluation by utilising multiple AI models from both OpenAI and Anthropic.

Critique works by using one model to plan, do the research and create the initial report, and then a second model to review, critique and score that report as an expert reviewer before the final report is created. The reviewer checks facts and analyses the findings, and only then is the final report produced.
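
As a rough illustration of the draft-then-review flow described above, the Python sketch below wires stand-in "models" together. It is not Microsoft's implementation: `critique`, `Review` and the `fake_*` functions are hypothetical names, the 0 to 10 score is an assumed scale, and the models are plain functions so the example runs without any API.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Review:
    score: float       # reviewer's overall score (hypothetical 0-10 scale)
    issues: List[str]  # problems the reviewer wants fixed


def critique(
    question: str,
    draft_model: Callable[[str], str],
    review_model: Callable[[str, str], Review],
    revise_model: Callable[[str, Review], str],
) -> str:
    """One model drafts, a second reviews, and the draft is revised using the review."""
    draft = draft_model(question)
    review = review_model(question, draft)
    if not review.issues:
        return draft  # nothing to fix, deliver the draft as-is
    return revise_model(draft, review)


# Stand-in models (assumptions for illustration only).
def fake_drafter(question: str) -> str:
    return f"Report on: {question}. Claim A."


def fake_reviewer(question: str, draft: str) -> Review:
    issues = [] if "[source]" in draft else ["Claim A has no citation"]
    return Review(score=6.0 if issues else 9.0, issues=issues)


def fake_reviser(draft: str, review: Review) -> str:
    return draft + " [source]" if review.issues else draft


final_report = critique("example question", fake_drafter, fake_reviewer, fake_reviser)
print(final_report)
```

The point of the design is that the writer and the reviewer are separate callables, so the reviewer never has to judge its own output.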

This approach (two researchers working together) delivers a huge increase in research quality and output by combining the roles of research writer and reviewer into a single deep research agent. It is the first agent that works in this way.

GPT drafts, Claude reviews for accuracy, completeness, and citation integrity before it’s delivered | Jared Spataro | Microsoft

Critique is now the default experience in Researcher when the user selects Auto mode in the model picker.

This approach simulates the review process used in leading academic and professional research institutions and is built around rubric‑based evaluation: a structured review process that is focussed on strengthening the report without the reviewer acting as an author itself. The reviewer examines the draft report from several different angles and then generates an enhanced report, which the first model then uses to refine the report. It focusses on:

- Source and reference assessment, which ensures that reputable, authoritative, and domain‑appropriate sources have been used and that all references and sources are verifiable against other sources.

- Completeness scoring, which evaluates the report to make sure it satisfies the intent (the ask) of the research brief given to it.

- Strict evidence grounding enforcement, which checks every claim and source to ensure everything in the report is anchored to the trusted sources verified above and that precise, research-paper-worthy citations are provided. This is all about ensuring trust and validity.

- The final, improved report, which is based on its analysis of the above and produced by working with the first model to tweak and improve the report.
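
One way to picture the rubric described above is as a set of per-dimension scores that the reviewer fills in. The sketch below is an illustration under stated assumptions: the dimension names follow the bullets above, but the 0 to 10 scale, the `threshold` value, the sample scores and every identifier are hypothetical and not taken from Microsoft's documentation.

```python
from dataclasses import dataclass
from typing import Dict, List

# Dimension names mirror the review criteria listed above; the scale is hypothetical.
RUBRIC_DIMENSIONS = ("source_quality", "completeness", "evidence_grounding")


@dataclass
class RubricResult:
    scores: Dict[str, float]  # one score per rubric dimension, 0-10 (assumed scale)

    def weakest(self, threshold: float = 7.0) -> List[str]:
        """Return the dimensions scoring below the threshold, lowest first."""
        low = [d for d in RUBRIC_DIMENSIONS if self.scores[d] < threshold]
        return sorted(low, key=lambda d: self.scores[d])

    def needs_revision(self, threshold: float = 7.0) -> bool:
        """A draft needs another pass if any dimension falls below the threshold."""
        return bool(self.weakest(threshold))


# Made-up scores, purely to exercise the code.
result = RubricResult(
    scores={"source_quality": 8.5, "completeness": 6.0, "evidence_grounding": 4.5}
)
print(result.weakest())         # ['evidence_grounding', 'completeness']
print(result.needs_revision())  # True
```

Returning the weakest dimensions first gives the drafting model a prioritised list of what to fix, rather than a single pass or fail verdict.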

"We evaluated Critique on the DRACO benchmark (Deep Research Accuracy, Completeness, and Objectivity)—100 complex deep research tasks across 10 domains, introduced by researchers from Perplexity and academia in February 2026.... We see the largest improvement in Breadth and Depth of Analysis (+3.33), followed by Presentation Quality (+3.04) and Factual Accuracy (+2.58). All dimensions show statistically significant improvements (paired t-test, p < 0.0001). Overall the score increased by 9.5 points" | Microsoft Official Blog

Council Mode

Council takes a different approach. It brings multiple model responses (OpenAI and Anthropic) together side-by-side in the Researcher experience, along with an analysis that shows where the models' responses agree with each other, where they differ, and which key and unique insights each model brings to the task.

Here, Researcher runs your research question through multiple deep‑reasoning models in parallel (currently GPT and Claude).

The best way to think of this is as two experts independently writing full reports, after which an expert assessor compares them and tells you where they align or diverge.

At the end, you get a summary of the output generated by both models and an analysis of where they agree and where they differ. Here, Researcher:

- Runs your research question through multiple deep‑reasoning models in parallel (e.g., GPT + Claude).

- Has each model produce a full, standalone report.

- Uses a third AI model to compare the reports, highlight agreements and disagreements, surface unique insights and provide a short “cover letter” summarising the differences.
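
The steps above can be sketched as a simple fan-out and compare pattern. This is a minimal illustration, not how Researcher is actually built: `council`, `model_a`, `model_b` and `simple_compare` are hypothetical names, the "models" are stub functions returning canned text, and the comparison is a naive set difference standing in for the third model.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict


def council(
    question: str,
    models: Dict[str, Callable[[str], str]],
    compare: Callable[[Dict[str, str]], str],
) -> Dict[str, object]:
    """Run every model on the same question in parallel, then compare the reports."""
    with ThreadPoolExecutor(max_workers=len(models)) as pool:
        futures = {name: pool.submit(fn, question) for name, fn in models.items()}
        reports = {name: fut.result() for name, fut in futures.items()}
    return {"reports": reports, "cover_letter": compare(reports)}


# Stand-in models and comparer (assumptions for illustration only).
def model_a(question: str) -> str:
    return "cost is rising; adoption is growing"


def model_b(question: str) -> str:
    return "cost is rising; regulation is tightening"


def simple_compare(reports: Dict[str, str]) -> str:
    """Treat each ';'-separated clause as a finding and diff the sets."""
    findings = {
        name: {clause.strip() for clause in text.split(";")}
        for name, text in reports.items()
    }
    shared = set.intersection(*findings.values())
    lines = [f"Agree: {sorted(shared)}"]
    for name, items in findings.items():
        lines.append(f"Only {name}: {sorted(items - shared)}")
    return "\n".join(lines)


result = council("example question", {"model_a": model_a, "model_b": model_b}, simple_compare)
print(result["cover_letter"])
```

Each report is kept in full alongside the cover letter, which mirrors the way Council lets you open either model's report after reading the comparison.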

You can then open the report created by each model by clicking on either the Claude report or the GPT report.

Council or Critique Mode?

Use Critique (default mode) when:

- You need a single, trustworthy, publication‑ready report.

- You’re doing competitive analysis, regulatory summaries, or anything that needs rigour and citation quality.

- You want the system to catch hallucinations, weak sources, or structural issues.

Use Council when:

- You want to understand where models disagree.

- You’re exploring ambiguous, emerging, or multi‑disciplinary topics.

- You want to compare reasoning styles (e.g., GPT vs Claude).

- You’re making a decision and want multiple viewpoints before choosing a direction.

- You want to compare the facts and research approaches of different models.

🤔 Let me know your comments, experience and views on these changes in the comments…
