All Blog Posts

Select a Category to filter your search or scroll and find what you’d like to read…

- Adoption and Change Management (35)

- Agents (8)

- Anthropic (6)

- Anthropic (7)

- Apple (1)

- Asus (1)

- Automation (10)

- AWS (1)

- Business (132)

- Cisco (33)

- Cisco Webex (2)

- Cloud and Data Center (55)

- CloudPC (9)

- Collaboration and Productivity (298)

- Copilot (106)

- Copilot+ PC (16)

- Creativity (13)

- Customer Experience (19)

- Data and AI (96)

- Data Protection (6)

- Dell (1)

- Devices (33)

- Education (5)

- End User Compute (82)

- Enterprise Infrastructure (19)

- Finance (1)

- Geeky Stuff (16)

- Google (1)

- Google (4)

- HPE (1)

- Intel (2)

- Juniper (1)

- Low Code (2)

- Microsoft (207)

- Microsoft Surface (16)

- Microsoft Teams (6)

- Nvidia (1)

- Observability (2)

- OpenAI (13)

- OpenAI (11)

- Palo Alto (1)

- Qualcomm (4)

- Quantum Computing (1)

- Regulatory Compliance (3)

- Security and Compliance (95)

- SharePoint (1)

- Splunk (4)

- Uncategorised (2)

- Vendor (1)

- Windows 10 (49)

- Windows 11 (99)

- WindowsInsider (24)

-

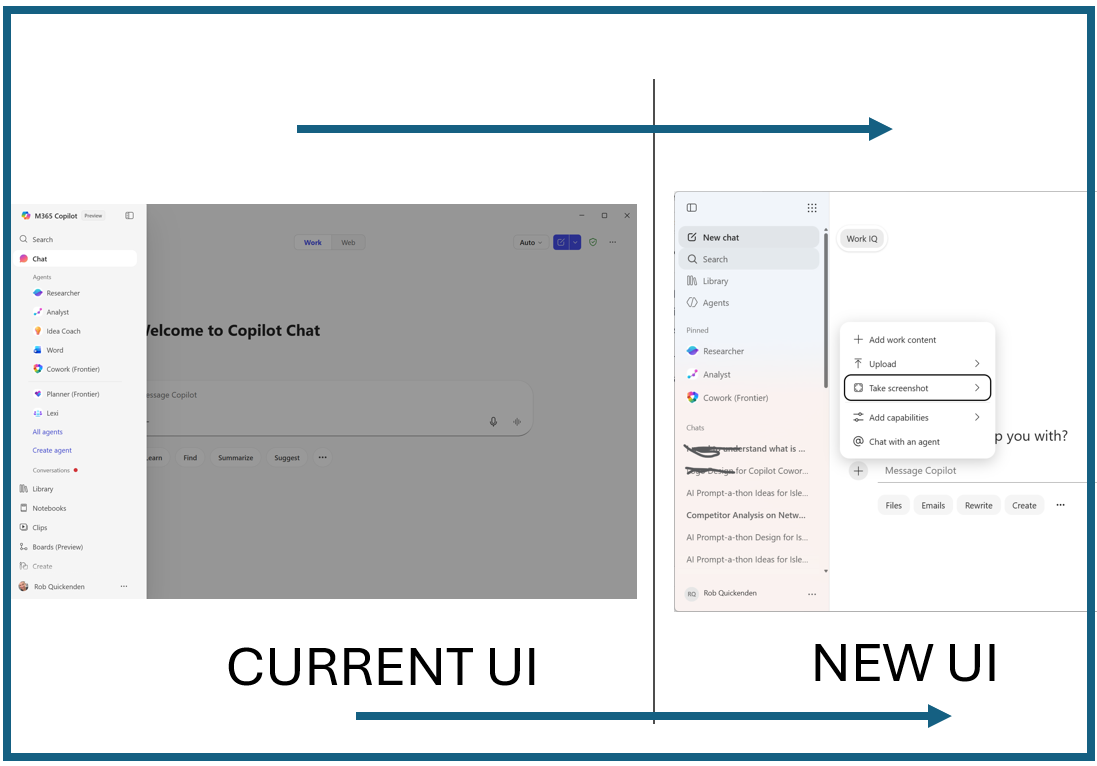

The new Microsoft 365 Copilot Experience

Microsoft has begun rolling out a redesigned Copilot experience across Microsoft 365, introducing a more consistent interface, clearer grounding signals, and a sharper separation between Copilot Chat and Copilot for…

-

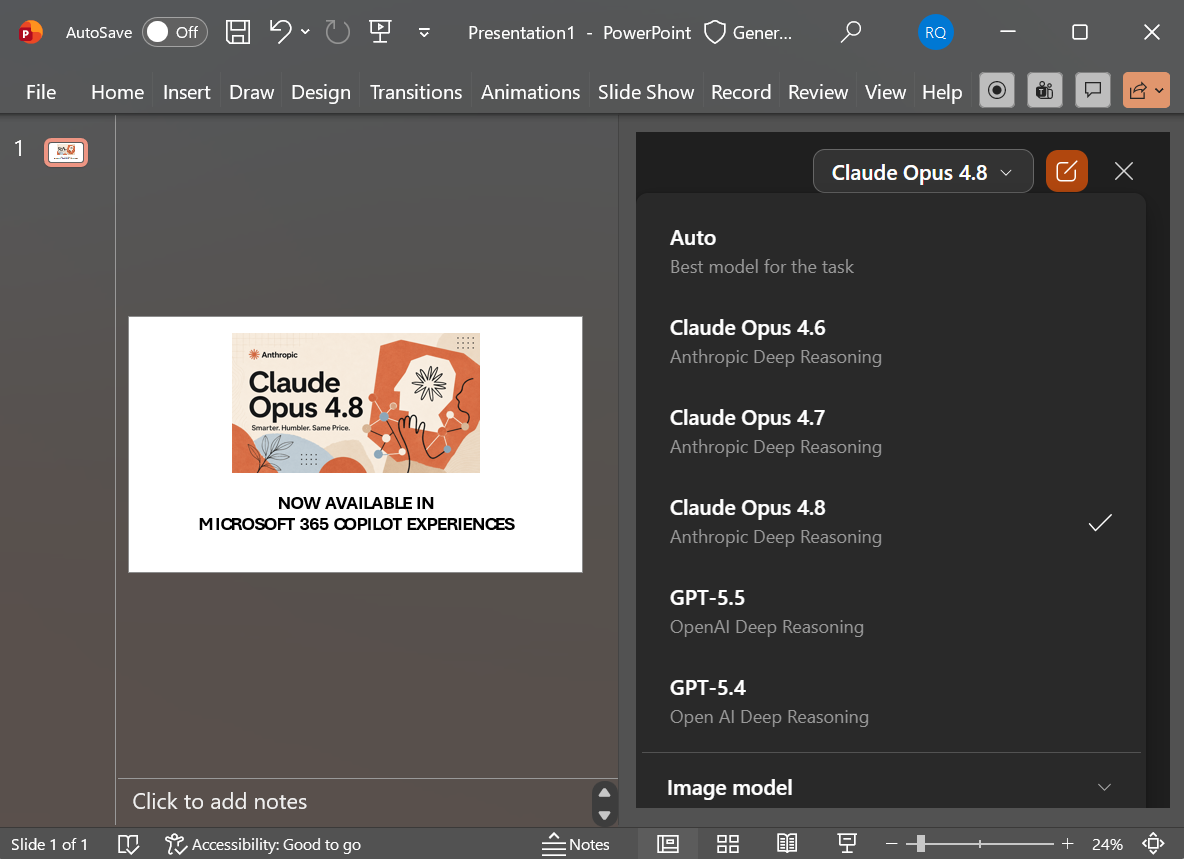

Claude Opus 4.8 now Available in Microsoft 365 Copilot

On day one of its release, Microsoft has rolled out support for Claude Opus 4.8 across Microsoft 365 Copilot for most commercial customers. This latest version of Opus is…

-

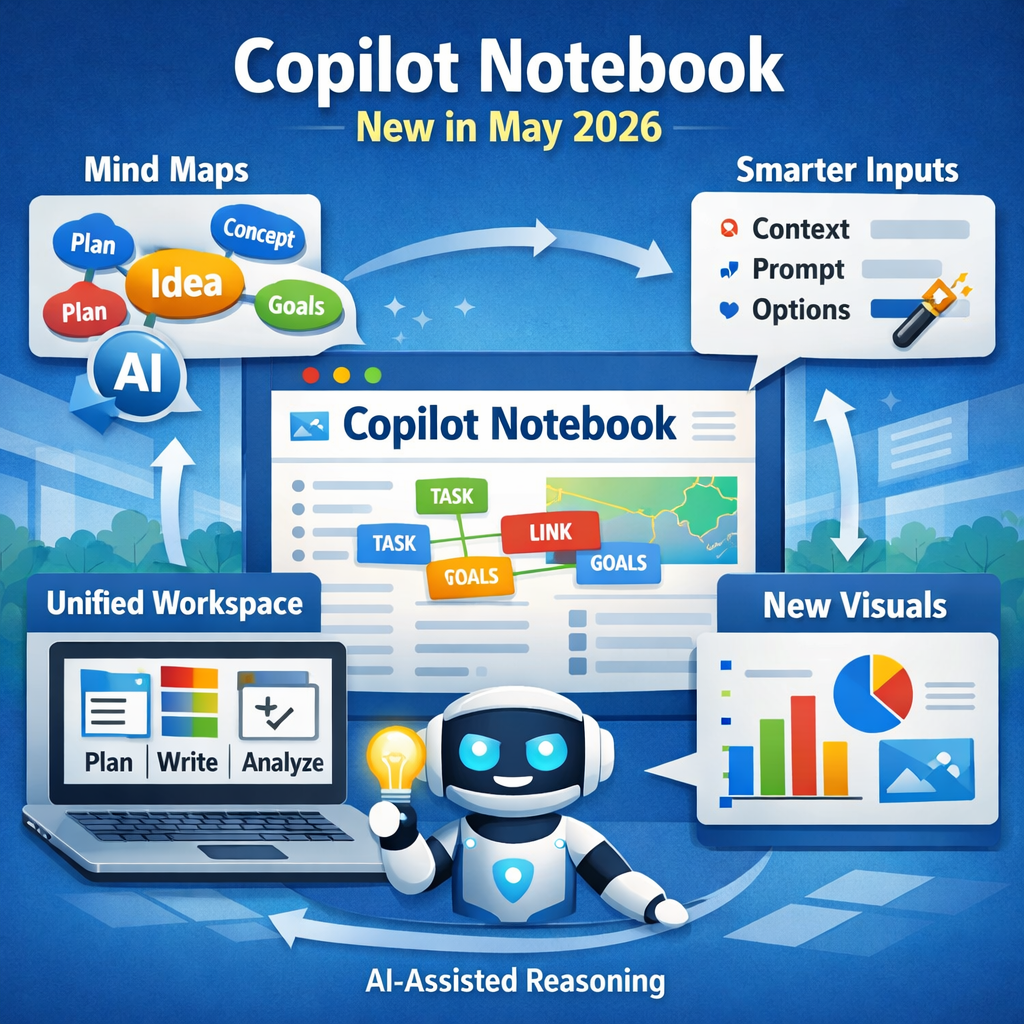

Big Copilot Notebooks Update brings new Visuals, Smarter Inputs, and a new Unified Workspace

Microsoft has released another significant set of enhancements to Microsoft 365 Copilot Notebooks, continuing the rapid evolution of what is becoming one of the most capable reasoning and planning tools…

-

Surface Pro 12 and Laptop 8 Business – what you need to know

Microsoft has unveiled the Surface Pro 12 and Surface Laptop 8. These are targeted at commercial / business users and whilst form factors stick with the current design, they pack…

-

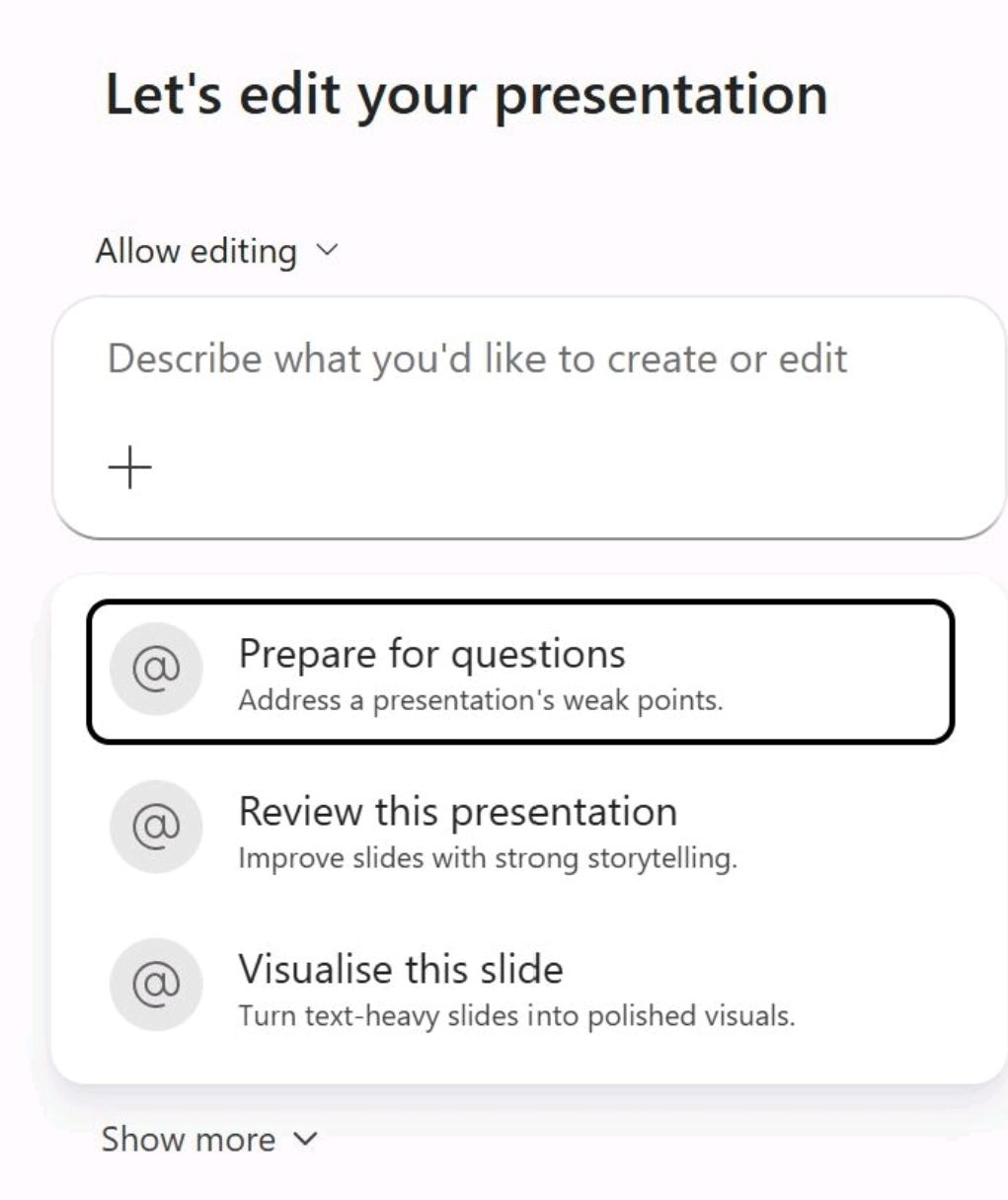

PowerPoint can help you Prep for your Presso

Microsoft is introducing a new AI‑assisted editing experience in PowerPoint that, amongst other things, can help you Prep for your Presso. This is part of the “Edit with” experience as…

-

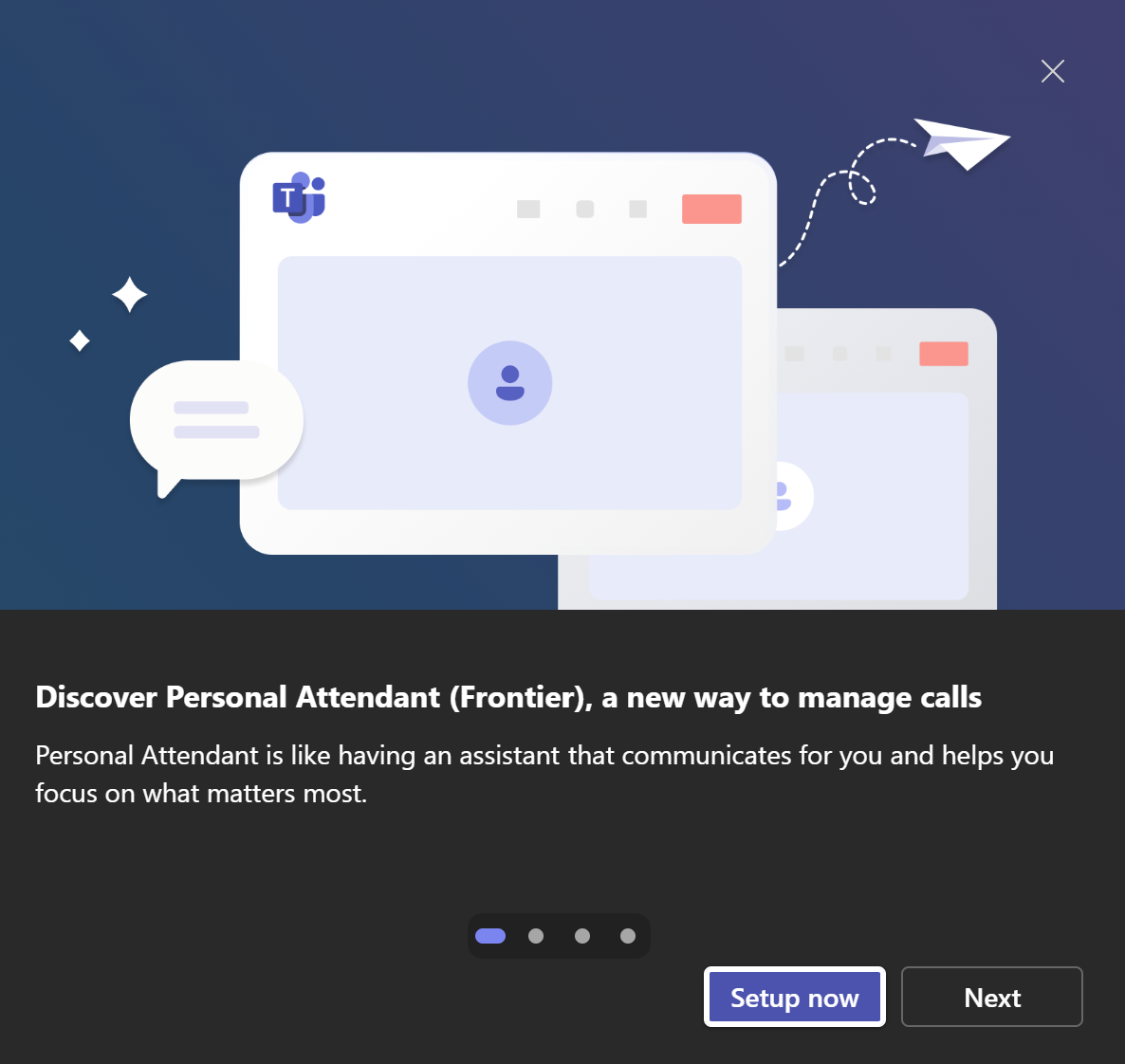

Copilot can now answer Teams Calls

Yes, this new feature rolling out for Microsoft Teams Phone users lets Microsoft Copilot answer, screen and manage your callers. If you want to skip the blog and go straight…

-

We Will Soon Be Managing Digital Employees – The Next Evolution of AI Chatbots and Agents

AI is moving through one of its biggest shifts yet – from simple chatbots that answer questions to intelligent agents that take action, and now to fully fledged digital employees…

-

Best Free AI Courses

Artificial intelligence skills are now essential across every industry, whether you’re building AI‑powered apps, deploying Copilot, strengthening cybersecurity, or exploring open‑source LLMs. There is lots of content out there and…

-

What is Windows 365 Flex

Windows 365 Flex is Microsoft’s newly renamed and expanded evolution of Windows 365 Frontline, designed to give organisations a more adaptable, cost‑efficient way to deliver Cloud PCs to employees, contractors or any…

-

Claude Models now available in Copilot Chat

As part of Microsoft’s multi-modal and model choice approach, Claude’s Opus models are now rolling out in Microsoft 365 Copilot Chat, starting with organisations enrolled in the Frontier (preview) ring…

-

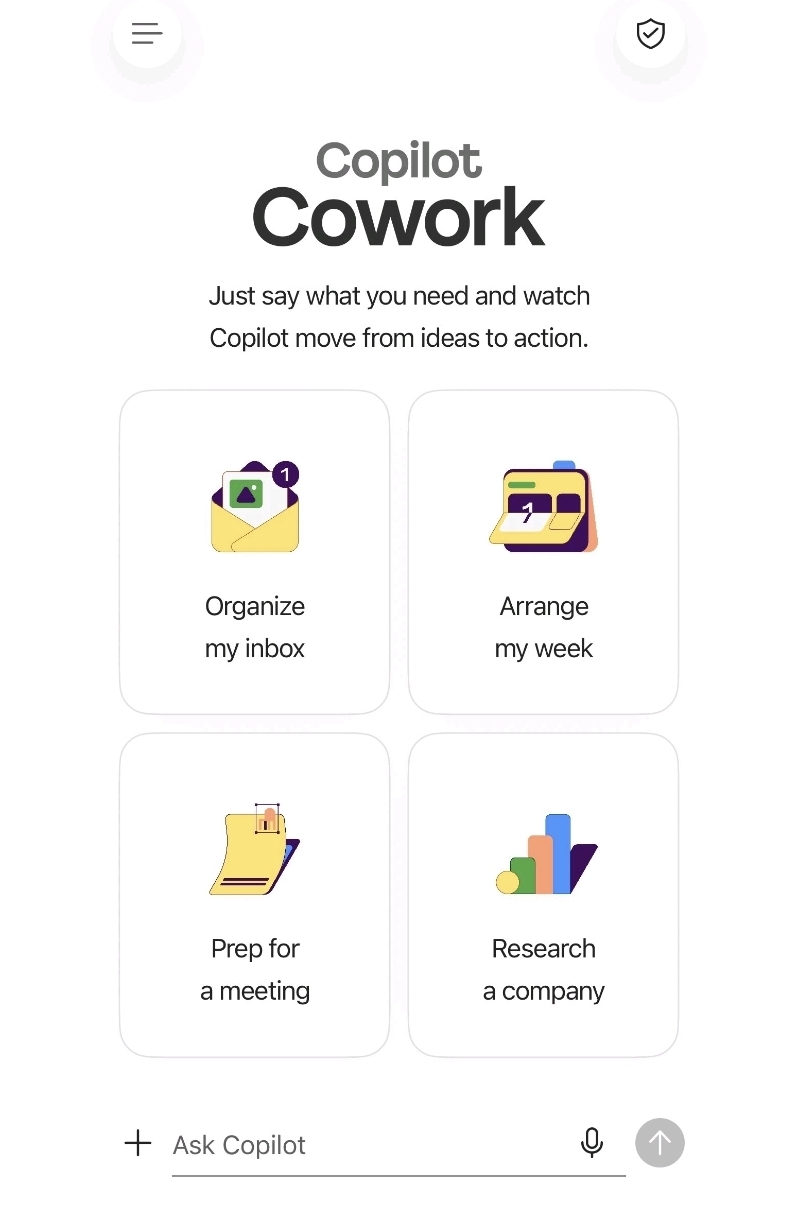

Copilot Cowork is now on mobile

If your organisation is enrolled in Copilot Frontier (early adopter) and has Anthropic models enabled in your tenant, Cowork in the mobile M365 Copilot experience is now rolling out, meaning agentic AI…

-

Will the AI Bubble Burst?

It doesn’t look like it – this week has been a big week for AI (as usual). The general trend is AI companies telling us that “AI is no longer…

-

Edit with Copilot Explained

If you have been “less than impressed” with the reality of Copilot in Office apps, I urge you to reset expectations and take a fresh look – because “Edit with Copilot”…

-

Chat GPT-5.5 comes to Microsoft Copilot

Microsoft is rolling out the integration of GPT-5.5 into its Copilot ecosystem, enhancing reasoning and multi-step task execution across GitHub, Microsoft 365, and Azure Foundry. This latest model from OpenAI…

-

Claude Opus 4.7 available in Microsoft 365 Copilot

Microsoft has expanded model choice again – the same day Anthropic’s Claude Opus 4.7 was released. This is now available inside Microsoft 365 Copilot Cowork, bringing faster reasoning, sharper vision…

-

Windows Team revamp Windows Insider Program

Microsoft is introducing new Experimental and Beta channels that separate risky builds from stable ones, while also giving testers a clearer path to try features before they ship. You can…

-

Video recaps in Teams explained

Microsoft has rolled out the new Video Recap feature in Teams for Copilot licensed users. Initially in preview, this is really useful (well, I have found it useful) for anyone…

-

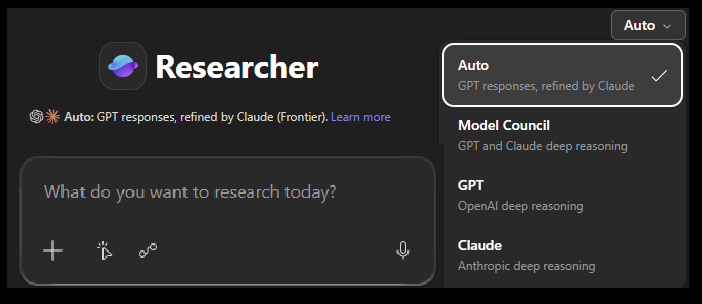

Microsoft Researcher: Critique vs Council explained.

The Copilot Researcher agent, a deep research agent that is part of M365 Copilot, has just received a major update that makes it the first-of-its-kind multi-modal researcher…

-

Cisco to acquire Galileo Tech

Cisco has announced its intent to acquire Galileo Technologies, a fast‑growing AI‑observability startup founded in 2021 by alumni from Google, Uber AI, and Apple. The deal is expected to close…

-

Copilot Cowork Custom Skills

One of the things I really, really like about Cowork is the ability to create custom skills. These allow you to tweak the way the model works, enabling you to customise how…

-

Copilot Cowork Walkthrough Guide

Having got back from MVP Summit (aka the NDA event) at the end of March and seeing the work Microsoft have been doing around the Anthropic-powered Copilot Cowork experience…

-

Copilot Cowork now in Frontier preview

Microsoft has quietly launched Copilot Cowork into the Frontier (preview) programme, opening early access to their new agentic side of…

-

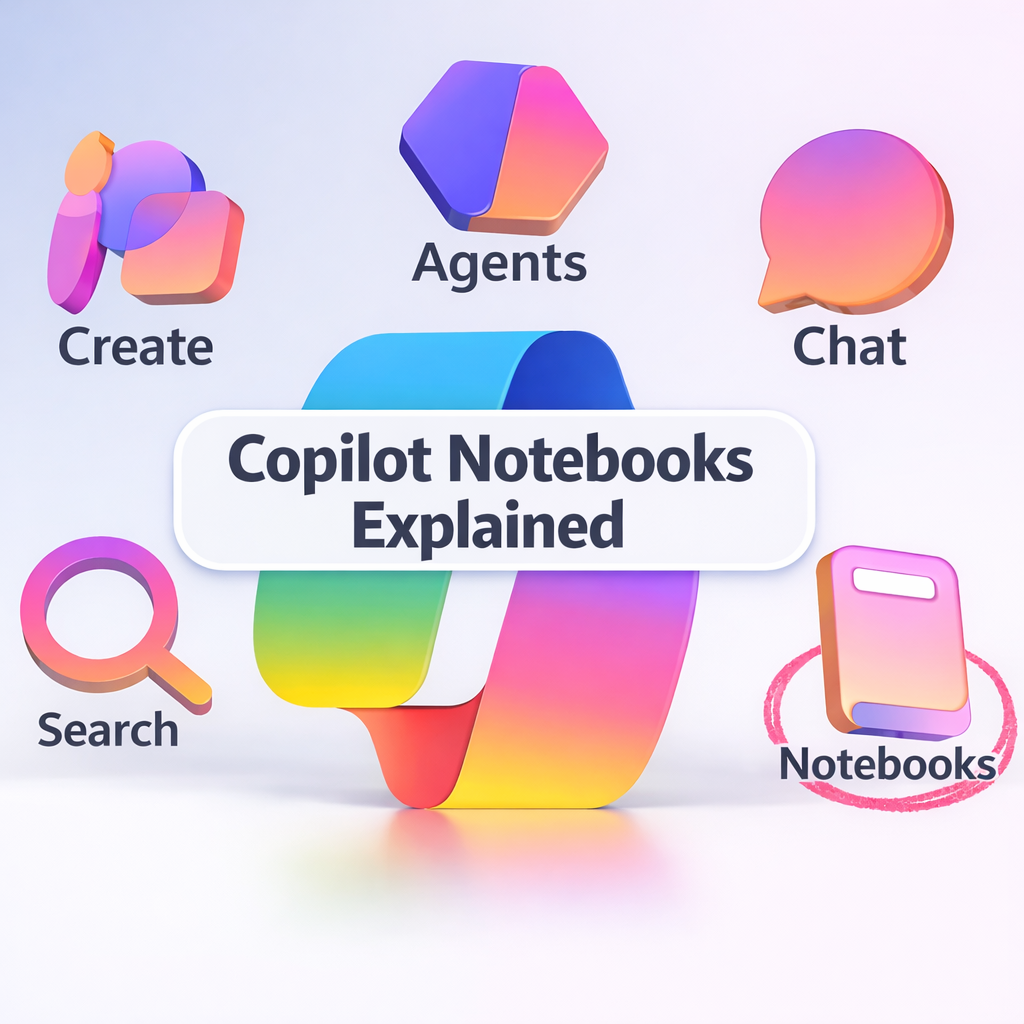

Microsoft Copilot Notebooks Explained

Copilot Notebooks are persistent, AI‑grounded project workspaces that gather files, chats, Copilot pages and notes so Copilot can reason across them. They are made up of one or more Copilot…

-

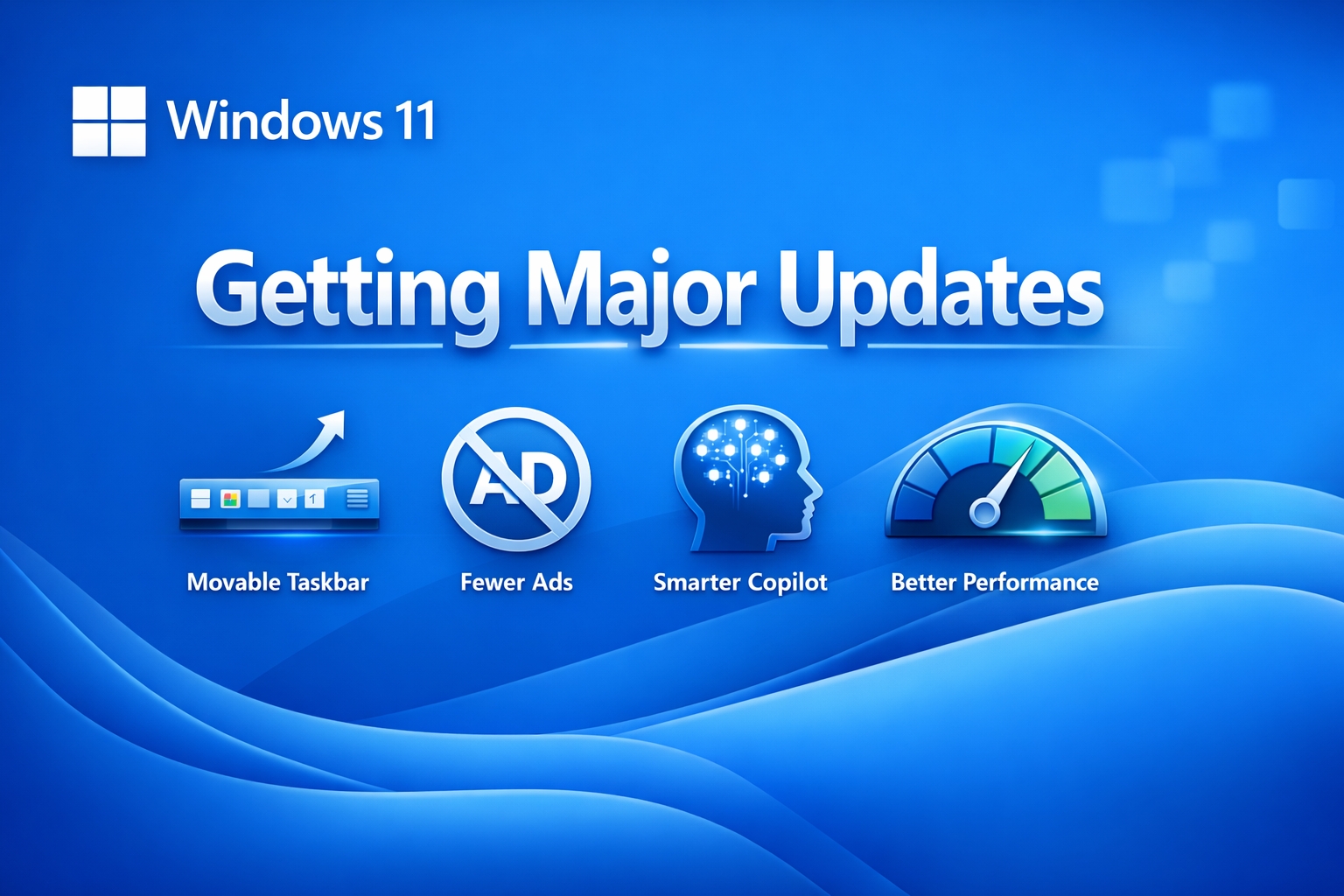

Windows 11 Getting Major Quality Updates including Movable Taskbar

Microsoft is rolling out major Windows 11 improvements in 2026, including a movable taskbar, fewer ads, a lighter Copilot experience, and faster performance.

-

Cisco expands Secure AI Factory with NVIDIA

Cisco has announced a major expansion of its Secure AI Factory with NVIDIA, enabling large enterprises to move AI projects from pilot to production in weeks instead of months. The…

-

Is Microsoft about to sue OpenAI over a $50bn deal with AWS?

Microsoft is apparently weighing up legal action against OpenAI and Amazon over a reported $50 billion AWS–OpenAI Frontier deal…

-

What the Microsoft Copilot Leadership Reorg means.

Microsoft are reshuffling their AI leadership and blending consumer (personal, home, family) and commercial (Microsoft 365) Copilot into a single business unit with unified strategy. Mustafa Suleyman remains at…

-

What we can learn from the Stryker Cyber Incident

The recent cybersecurity incident disclosed by Stryker on March 11, 2026 caused global disruption to an aspect of their Microsoft environment. In their disclosure, Stryker said it activated their…

-

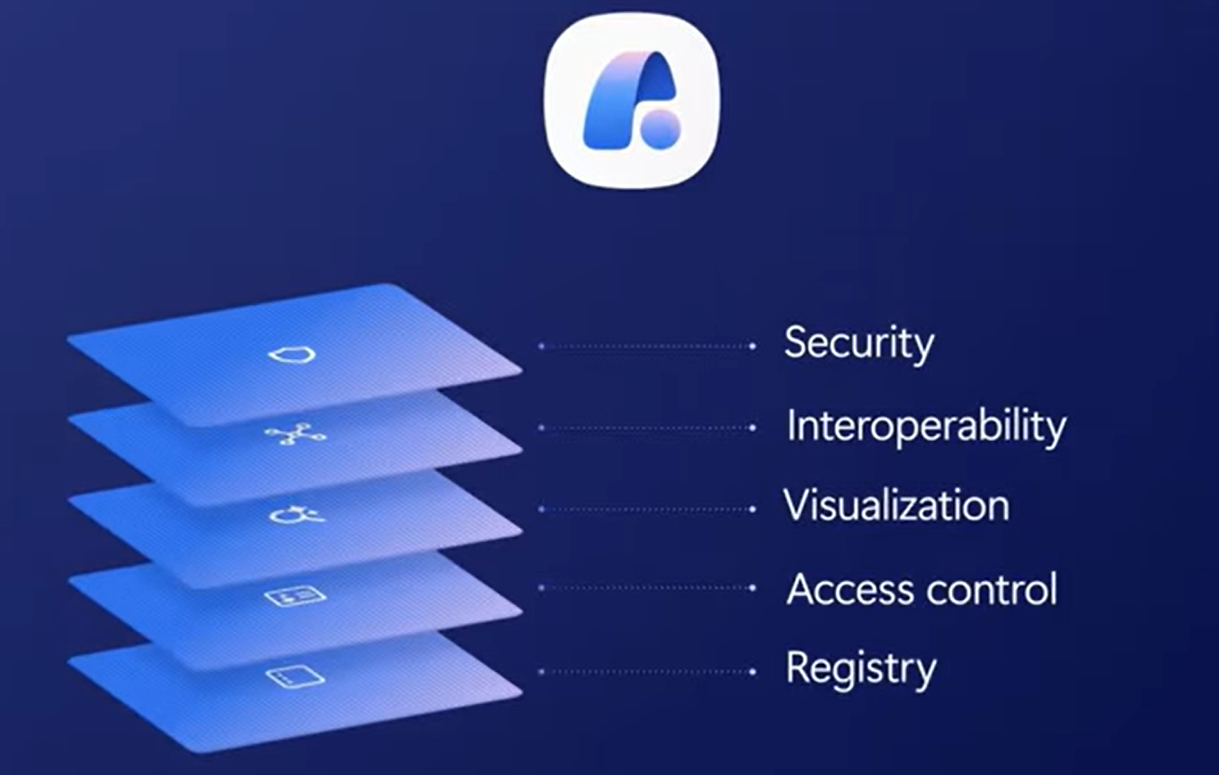

Agent 365 Explained – The Identity and Governance Layer for AI Agents

When Microsoft first unveiled Agent 365 at Ignite in November 2025, it felt like the beginning of the next phase of agent readiness – the moment where “agents” stopped being…

-

Microsoft 365 Copilot: Wave 3

Microsoft 365 Copilot Wave 3 is the latest “version/release update” of Microsoft 365 Copilot, which introduces agentic AI capabilities through Copilot Cowork to execute multi-step tasks across Microsoft 365 apps.…

-

Cisco Desk Pro G2 now Teams Certified

Cisco’s new Desk Pro G2 desk device, which was announced at ISE and Cisco Live this year, is now certified for Teams Rooms. The Desk Pro G2 is a 27″…

-

Microsoft 365 E7 Explained – The new “AI Frontier Suite”

Today marks one of the biggest shifts in Microsoft’s productivity and security stack since the launch of Microsoft 365 E5 back in 2015. Microsoft has officially unveiled Microsoft 365 E7:…

-

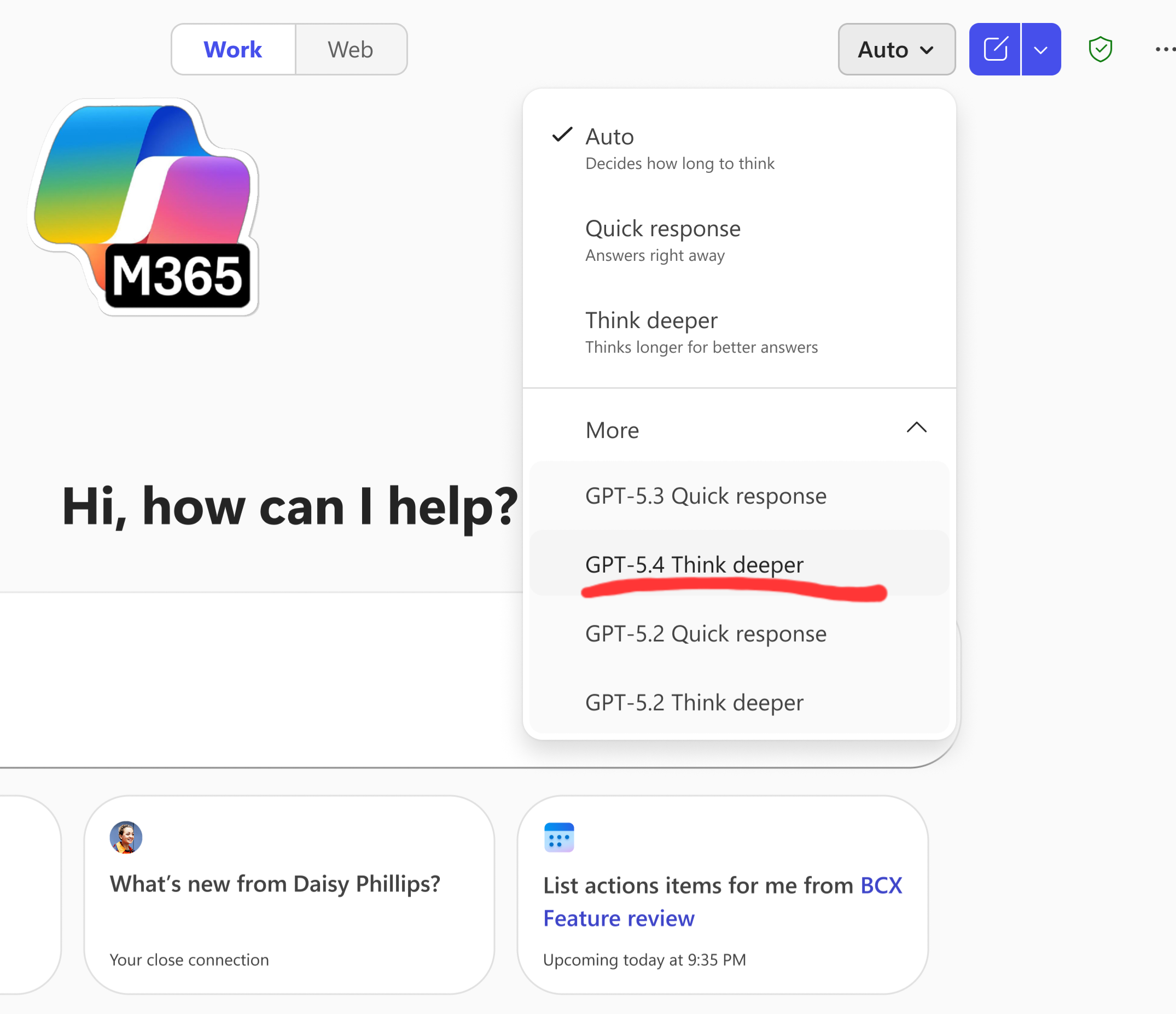

GPT‑5.4 Thinking rolling out in Microsoft 365 Copilot

Hot on the heels of GPT‑5.3 released this week, which I covered in my last blog, Microsoft is now rolling out support for GPT‑5.4 Thinking to Copilot Studio early…

-

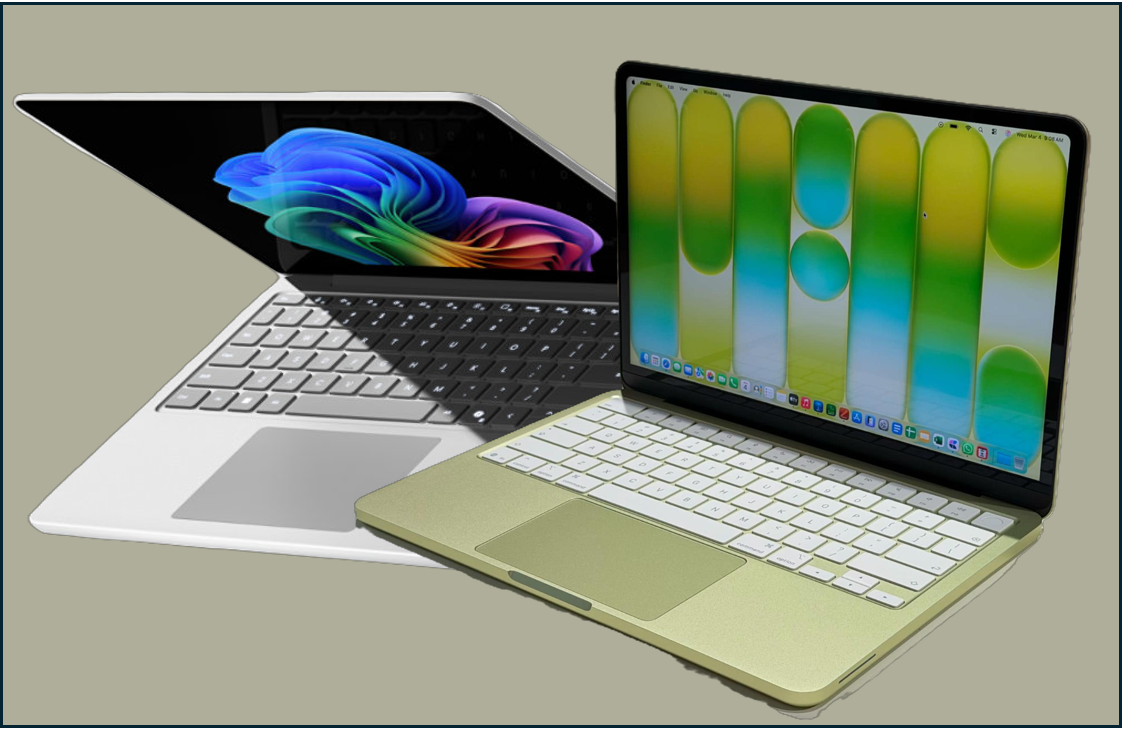

Apple Raised the Stakes – How Microsoft Should Respond with a new Surface Go

Apple has just reset the entry-level laptop conversation by announcing a premium-looking MacBook which starts at just $599 (£599 UK). Whilst not a comparison in terms of specs, it will…

-

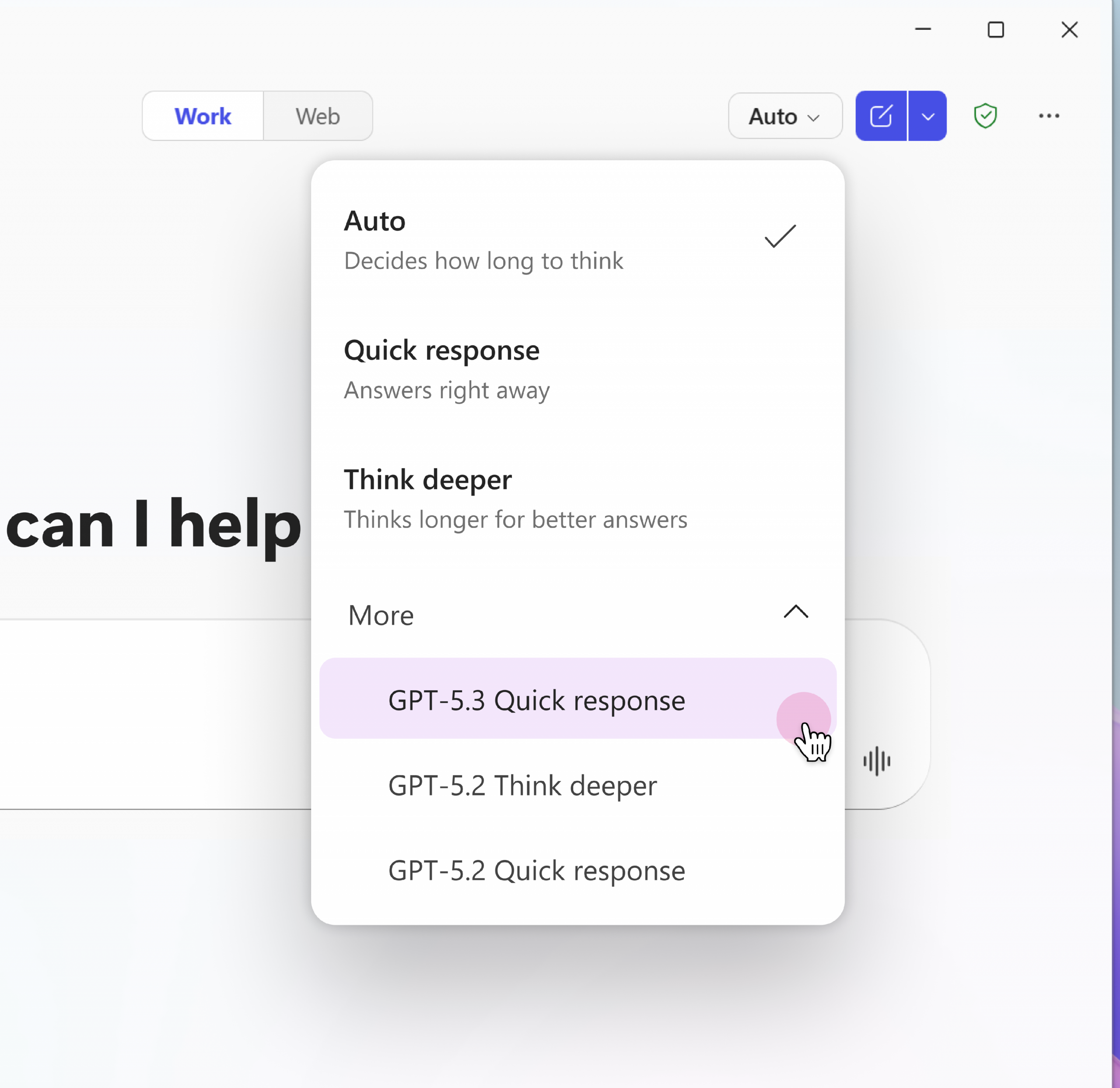

Microsoft Rolling Out GPT‑5.3 Instant to Microsoft 365 Copilot Users

Microsoft has started rolling out OpenAI’s GPT‑5.3 Instant across Microsoft 365 Copilot and Copilot Studio, bringing a noticeable upgrade in speed, clarity, and task‑aligned responses. This update is now live…

-

Why Context is the Most Valuable Layer in Enterprise AI

If you’ve been following Microsoft’s AI story over the last few months, and especially if you have attended any of Microsoft’s city-hopping AI Tours, you’ll have noticed a subtle…

-

Windows 365 Devices: ASUS, Dell, and Microsoft Expand the Cloud PC Ecosystem

Microsoft’s Cloud PC vision took another confident step forward this week with the introduction of two new Windows 365‑powered mini PCs from ASUS and Dell – compact, secure, cloud‑first devices…

-

Ways to Build Agents: Nube to Pro

Whilst at Microsoft’s AI Tour this week, I was asked by many people about the difference between building agents in AI Builder (in the Copilot App), Copilot Studio and Azure AI…

-

What are Microsoft 365’s New AI Watermarks?

Microsoft is soon rolling out a new (optional) AI watermarking policy across Microsoft 365 – and while the headlines make it sound dramatic, the real story is more nuanced. The…

-

Are you leaking company data to non-sanctioned AI Apps?

We repeatedly hear stories around personal and corporate data being leaked on the web through the un-sanctioned use of consumer grade AI tools like Grok, ChatGPT and others. A report…

-

Microsoft AI Tour London

Morning all. I’ll be at the AI Tour in London today talking and showcasing two of my favourite Microsoft technology areas. Drop by the device booth to say hello, the Copilot…

-

AI Prompting Guide: Get Better Results with Copilot and ChatGPT

Generative AI is now woven into the way we work both at work and home. Whether you’re drafting content, analysing data, or simply trying to get unstuck, tools like Microsoft…

-

Microsoft Expands Copilot Connectors: What the New Integrations Mean for Microsoft 365 and Enterprise AI

Microsoft has expanded the Copilot Connector catalog with 35 new connectors so Microsoft 365 Copilot can reach many more external systems and data sources directly, with new developer tooling and…

-

Is AI Forcing the Network conversation organisations have been avoiding?

Artificial intelligence is no longer just changing applications – it’s exposing the limits of enterprise network infrastructure. In their Q2 earnings report, Cisco’s leadership team described the current campus refresh…

-

What are Copilot connectors?

A Copilot connector is the plumbing that brings your organisation’s external content into Microsoft 365 so Copilot can ground answers in real, company-specific data. Practically speaking, a connector extracts or…

-

Cisco updates contract terms in Response to Market Volatility

Cisco’s recent update to partner contract terms, prompted by rising memory prices, has caught attention across the partner and customer community. Of course, any change that vendors make that has…

-

Cisco Live Amsterdam 2026: AI‑Ready Networking to Reshape Enterprise Infrastructure

Whilst I wasn’t able to make it this year, Cisco Live EMEA in Amsterdam was full of announcements and updates to their product sets. The message to customers and partners…

-

2026 Role‑Based training for Microsoft 365 Copilot users

I’m a huge believer in role‑based learning because it gives people practical, relevant ways to bring AI into the work they already do. Wherever you are on your Copilot journey…

-

How OpenAI and Anthropic Just Triggered the Next Big Shift in Software

The last week has felt like a turning point in the AI landscape. Not because of a single product launch, but because two of the biggest players in the industry…

-

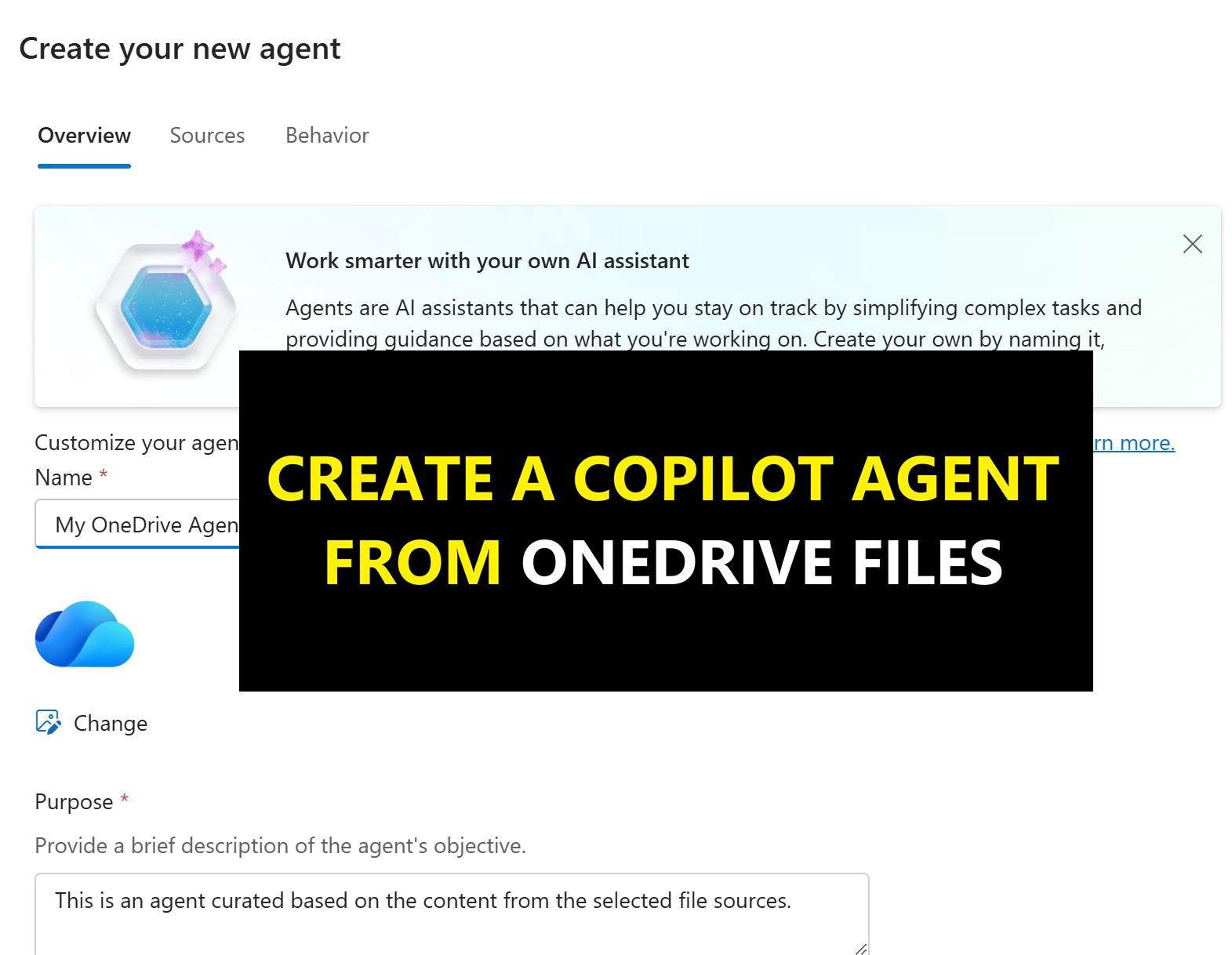

Create Agents in One-Click from your OneDrive

Microsoft has now made it possible to create grounded knowledge “agents” directly from OneDrive. If you’ve not seen this yet, it allows you to select up to 20 OneDrive for…

-

Microsoft Q2 FY26 Earnings: Cloud & AI Power Record Growth

Microsoft has opened 2026 with a landmark quarter that firmly positions them as the global leader in enterprise cloud and AI adoption. In the earnings report, Microsoft has achieved milestones…

-

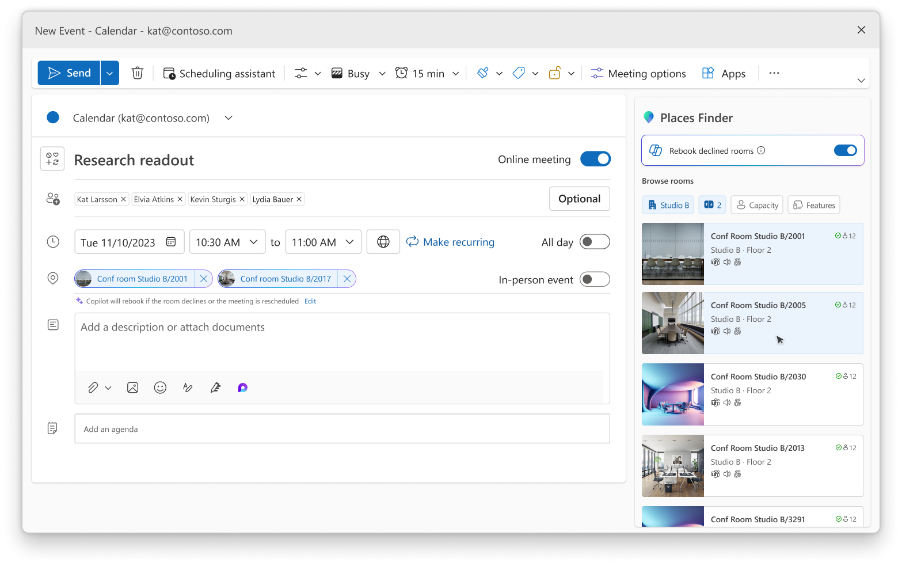

Microsoft Teams Licensing Updates: More Premium Features for Everyone

Microsoft has announced some big changes to the Teams licensing model, aimed at making more advanced features available to everyone, along with updates to their Places product set which is…

-

M365 Copilot Image Generation Levels Up, and Video Summaries are coming to Copilot Notebooks

Microsoft has kicked off 2026 with two significant enhancements to Microsoft 365 Copilot – both providing a clear signal of how improvements in multi-media creation in AI will support creativity,…

-

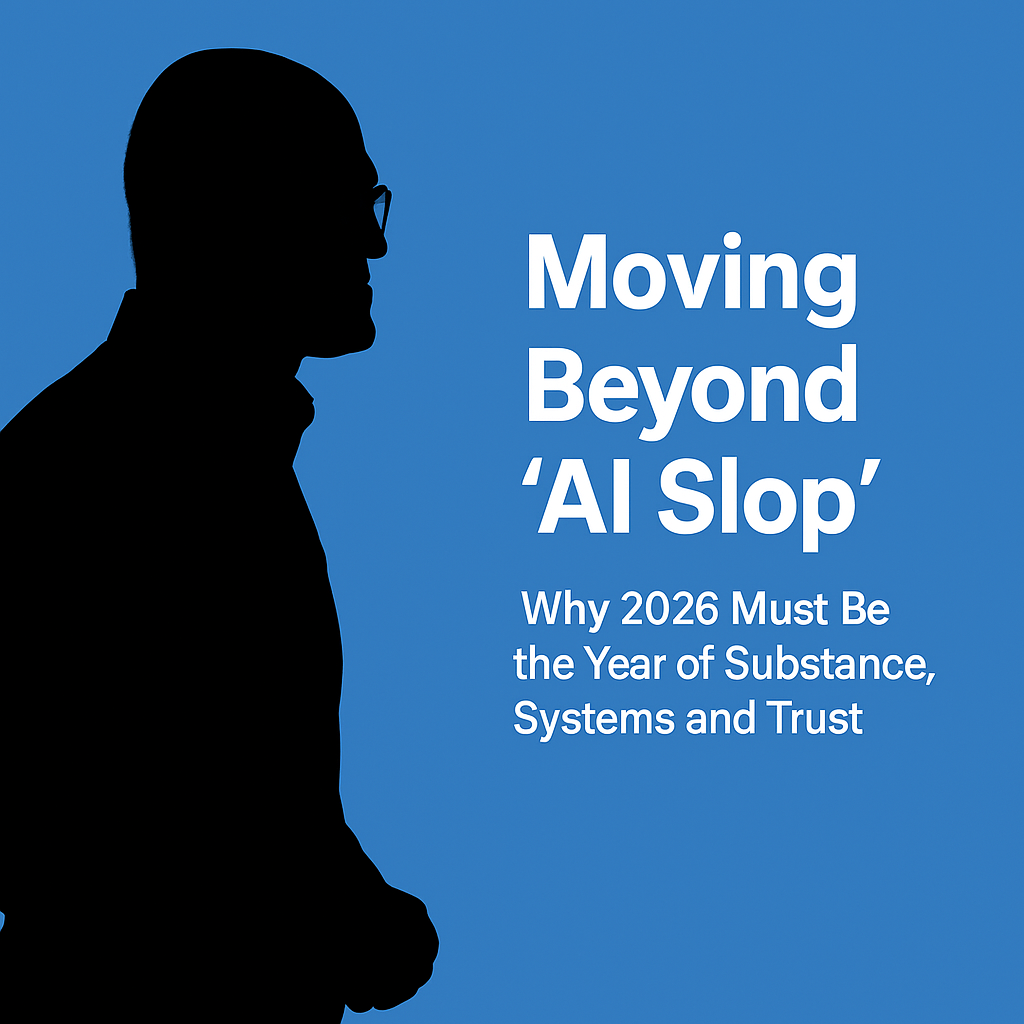

Satya Nadella’s Call for an “AI Reset” – What Business Leaders Must Prioritise in 2026

Following Satya Nadella’s “looking forward to 2026” post, this blog explores the detail behind it and why 2026 must move beyond AI slop and shift toward trusted, outcome‑driven AI projects…

-

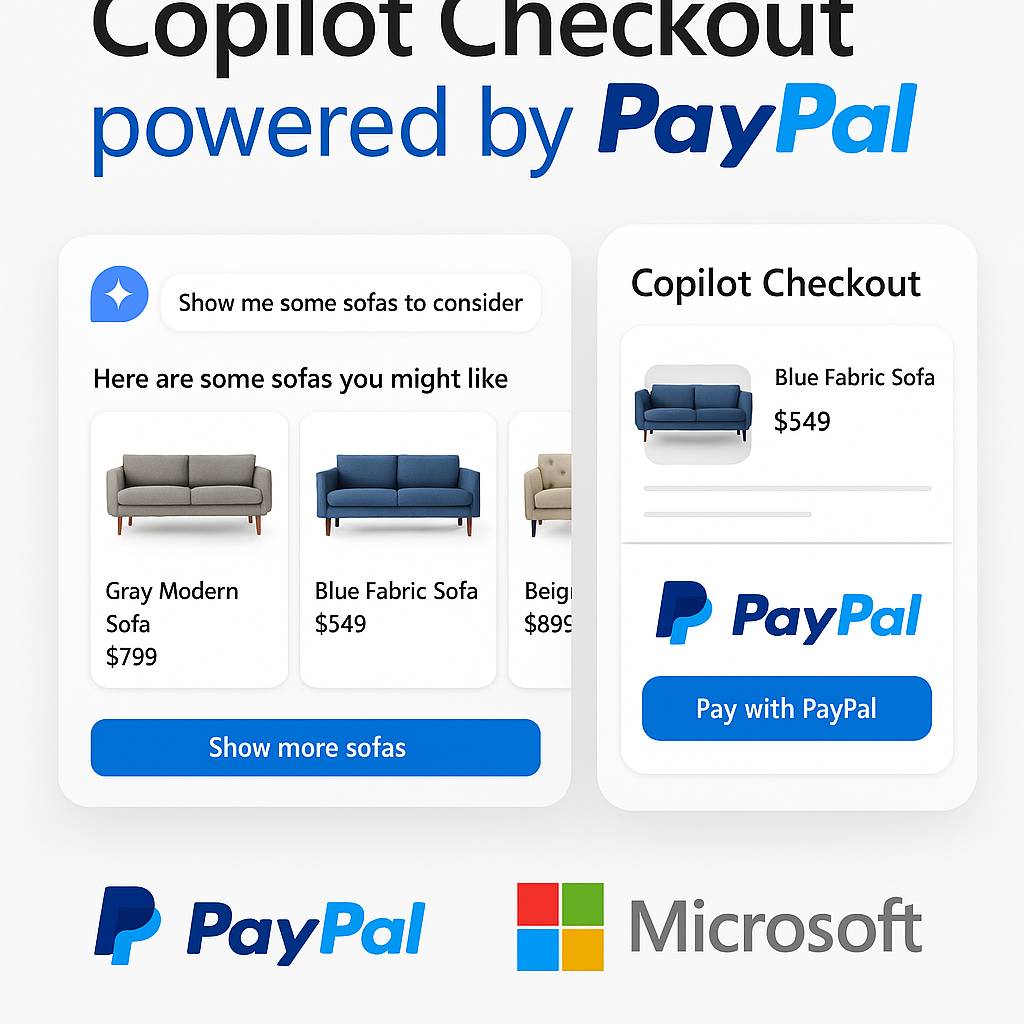

What is Copilot Checkout? Microsoft and PayPal’s new AI-Powered Commerce Experience.

Microsoft and PayPal have officially joined forces to launch Copilot Checkout, a groundbreaking integration that redefines the online shopping experience. This collaboration merges Microsoft’s conversational AI capabilities with PayPal’s trusted…

-

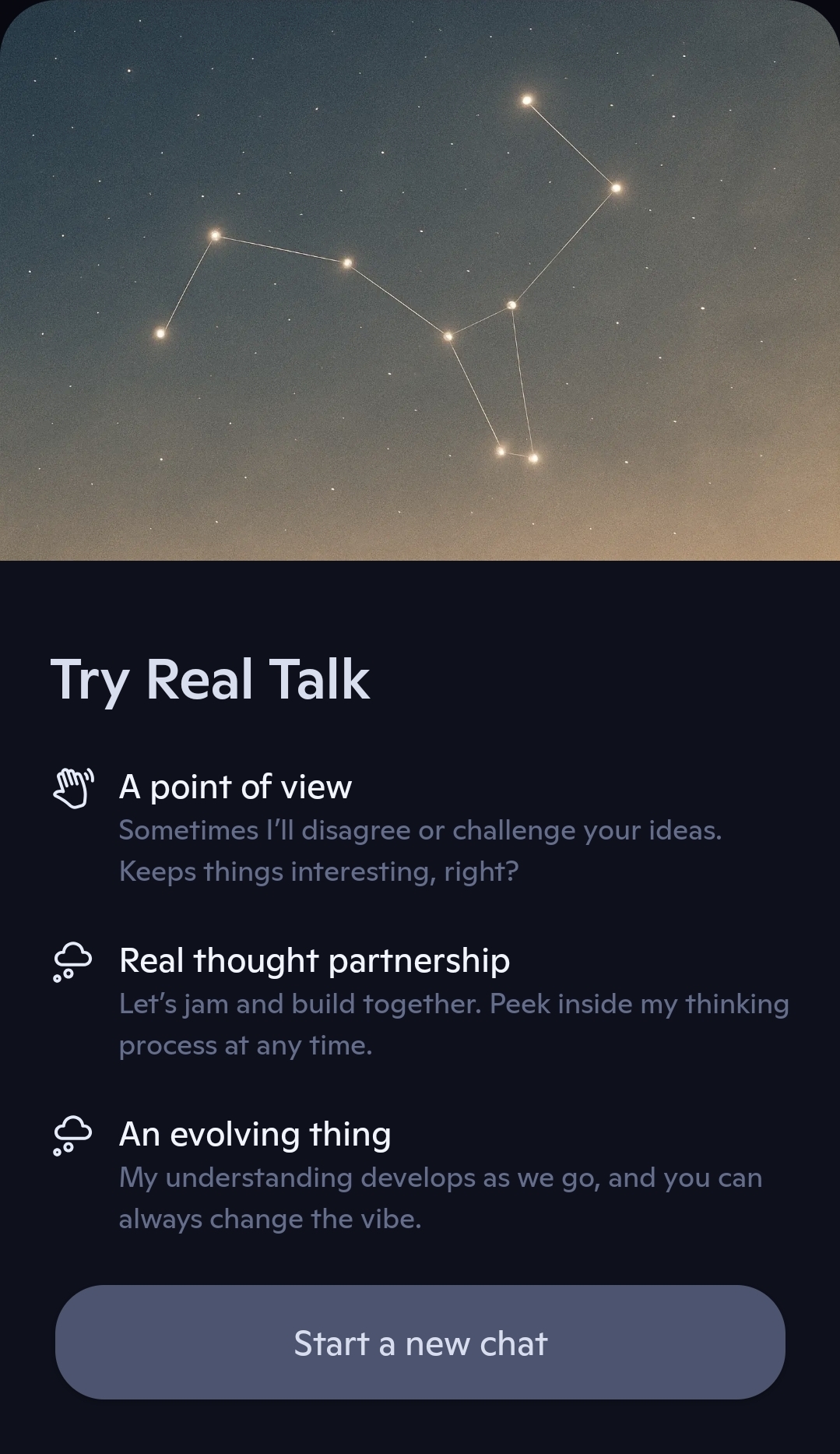

What is Copilot Real Talk Mode? And how to use it.

Back at the “Fall update in October”, Microsoft announced that a new talk mode called “Real Talk” was coming to Copilot. This has been available in the US for a…

-

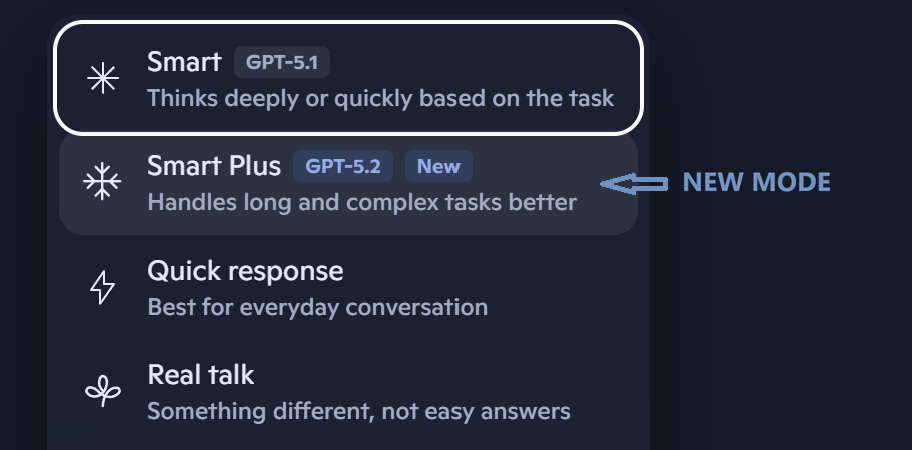

Copilot gets free GPT‑5.2 upgrade with “Smart Plus” mode

Microsoft has begun rolling out GPT‑5.2 across Copilot on the web, Windows, and mobile as a free upgrade. It sits alongside GPT‑5.1 rather than replacing it, giving users a clear…

-

Automatic Alt Text on Copilot+ PCs: A Small Feature with a Big Accessibility Impact

Microsoft has rolled out a useful update to Office Suite apps like Word, Excel and PowerPoint which provides automatic, “on‑device” Alt Text generation for images. This is a great…

-

How to get free security updates for Windows 10

If you are a home/consumer user using Windows 10 – because you are unwilling or unable (due to hardware restrictions) to upgrade to Windows 11, and not able…

-

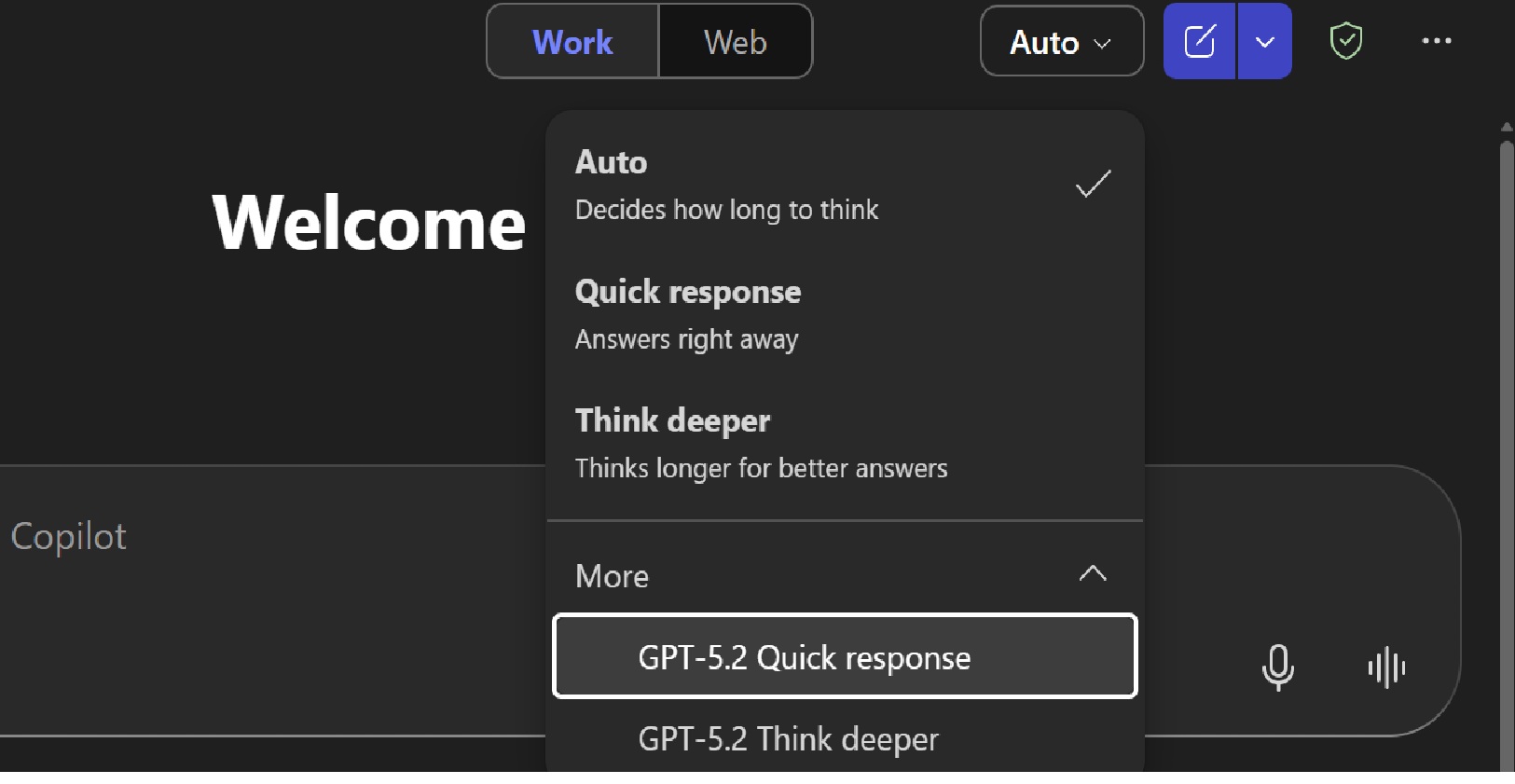

GPT-5.2 now available in Microsoft 365 Copilot

Microsoft has just (11th Dec) started rolling out OpenAI’s GPT‑5.2 across Microsoft 365 Copilot and Copilot Studio, marking another significant leap in AI-powered productivity. The differences between GPT5 and GPT5.2 provide “a…

-

When the Cloud Sneezes: a look at the ‘Outage Season’

The past few months have been a bruising reminder that even the biggest cloud providers can stumble. AWS, Microsoft Azure, and Cloudflare have all suffered major outages, disrupting any services…

-

More Anthropic Models coming to Microsoft Copilot

Microsoft is making a major change to how AI models are integrated into Copilot experiences. From 7 January 2026, Anthropic’s models will be enabled by default for Microsoft 365 Copilot…

-

Microsoft 365 Price Changes: Preparing for July 2026

With over 1,000 new features and updates across the Microsoft 365 stack in the last couple of years, Microsoft has confirmed that the commercial Microsoft 365 suite will undergo…

-

Microsoft Ignite 2025 – “The Agentic Shift”

Microsoft used Ignite 2025 to tell the world that “agents are now the primary interface for enterprise work”. The focus throughout Ignite was about evolution from chat-bots to multi-discipline teams…

-

What is Work IQ?

The focus of Microsoft Ignite 2025 saw Microsoft fully commit to Agentic AI as the next platform layer. Across all of the briefings, keynotes, technical sessions, and partner announcements, Microsoft repeatedly…

-

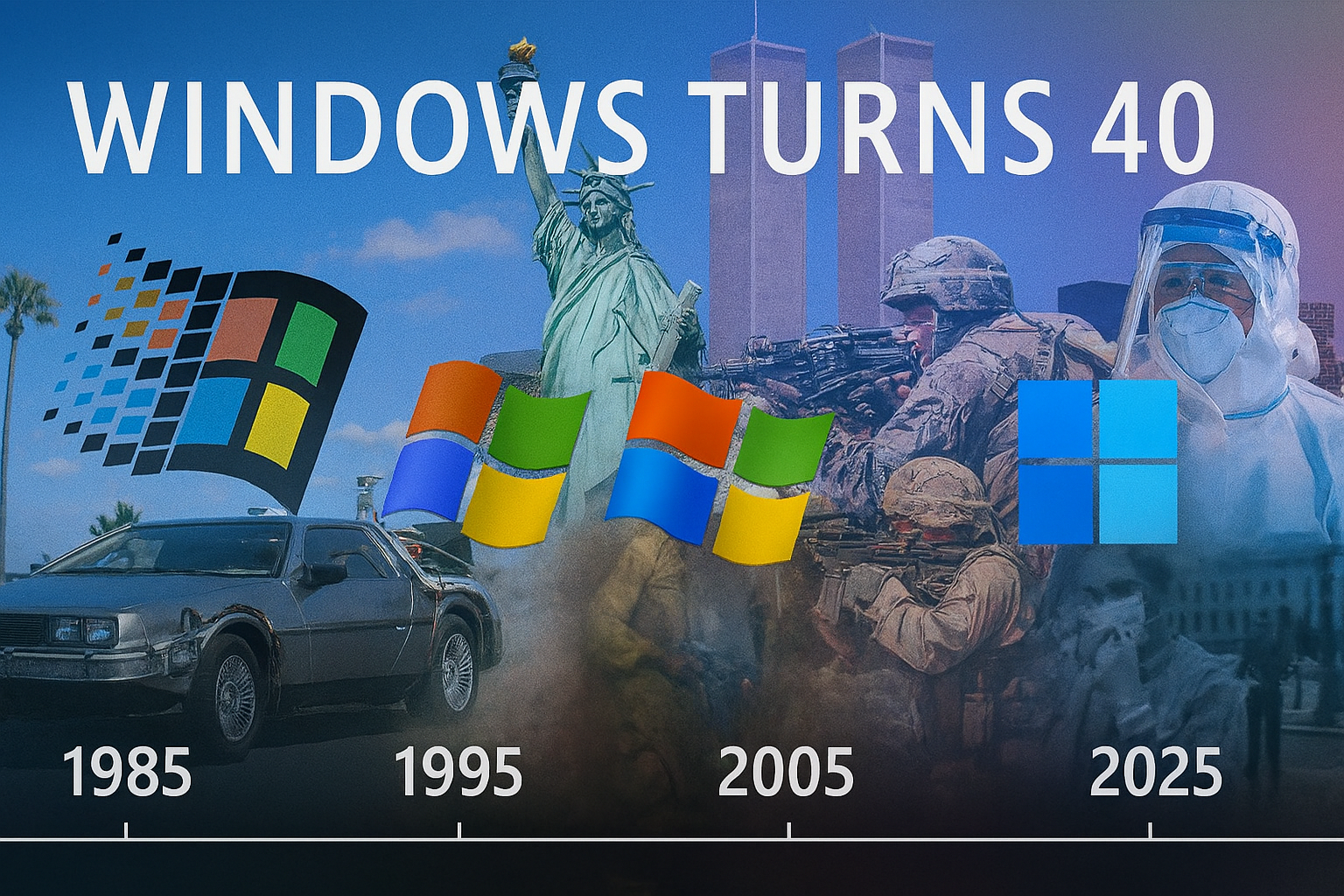

Windows at 40: Milestones that changed computing for ever

It was forty years ago (now that makes me feel old) that Microsoft launched Windows 1.0. This was a graphical shell that was layered over MS-DOS. Whilst it was clunky,…

-

With Security Copilot now part of Microsoft 365 E5 – what do you actually get?

At Ignite this week, Microsoft announced that Security Copilot will now be included in Microsoft 365 E5 (and E5 Security) at no additional cost. Security Copilot delivers “AI-powered, integrated, cost-effective,…

-

Sora-2 now in Microsoft 365 Copilot

At Ignite 2025 this month, amongst a long list of AI and Security updates, Microsoft announced that OpenAI’s Sora 2 text-to-video model is now integrated into Microsoft 365 Copilot in…

-

Microsoft 365 Copilot for small and medium businesses

Microsoft has just announced a much more affordable, aka cheaper (but fully featured), license for small and medium businesses. From December 1st, Microsoft 365 Copilot Business becomes available to organisations…

-

Windows 365: What, Where and Why?

As Windows 365 settles well into its fourth year, there have been huge advancements in capability, connection methods, endpoint innovation, and licensing options – with even more expected as Microsoft…

-

AI Explained: 9 Key Concepts You Need to Know in 2025

Artificial intelligence, whilst a phrase used in most of our daily lives, can feel huge, strange, unknown, scary, exciting and sometimes even intimidating. In this post I decided I would…

-

Cisco 360 Partner Program: Driving Partner and Customer Value in the AI Era

This blog summarises the key updates from Cisco Partner Summit 2025 regarding the Cisco 360 Partner Program and its impact on partner profitability, customer value, cross-selling, AI specialisations, and new…

-

Cisco Partner Summit 2025: The Infrastructure Behind the Digital and AI Era

Last night I tuned into aspects of the Global Cisco Partner Summit on demand (the live event taking place in San Diego this week). Day one messaging to partners was…

-

Copilot Researcher Agent gets “Computer Use”

Yes, the Copilot Researcher Agent, once focused purely on gathering and summarising information, can now take action on your device through a capability called Computer Use. This provides secure interaction…

-

No Agenda? No Excuse. Copilot can now help with agenda creation.

Let’s talk about one of my biggest pet peeves: the agenda-less meeting invite. You know the type. A calendar ping lands in your inbox with a vague title like “Catch-up”…

-

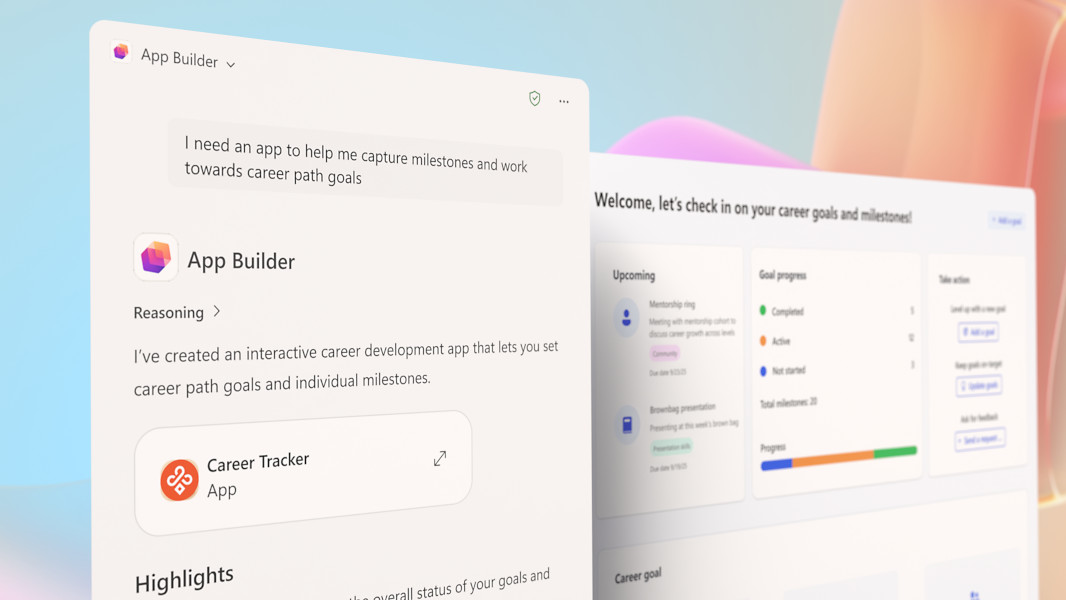

Microsoft “App Builder” & “Workflow” Agents

Microsoft is expanding its Copilot Frontier Programme with two powerful new agents. These are the App Builder and Workflows Agents. These put “app creation” and automation directly into the hands…

-

Cyber Resilience – the new Trust Currency

TL;DR – UK Cyber Threats Surge. The UK’s National Cyber Security Centre (NCSC) has published its annual threat review, and the numbers are staggering but not surprising as we have…

-

Teams Mode for Microsoft 365 Copilot is here.

Microsoft Teams continues to evolve at a rapid pace. Known as “Teams Mode for Microsoft 365 Copilot” this essentially means Copilot is coming to Teams Group Chats as a participant…

-

Microsoft introduces Mico – Copilot’s new face and voice

In a live YouTube stream on 24th October, Microsoft unveiled a wave of new consumer features for Copilot (dubbed fall update) – headlined by the official debut of Mico, a…

-

Cisco and Microsoft top 2025 UC Gartner Magic Quadrant

TL;DR: As a leading Cisco and Microsoft partner, it’s great to see that, yet again, both Microsoft and Cisco remain Leaders in the Gartner Magic Quadrant for collaboration platforms with…

-

MAKING EVERY WINDOWS PC AN AI PC

On October 14th 2025, Windows 10 officially reached end of support. If you still have a PC/Laptop running Windows 10, it will not suddenly stop working – but unless you…

-

IDC – Cisco lead in Enterprise Wireless LAN technology.

Cisco has again been recognised as a Leader in the IDC MarketScape for Enterprise Wireless LAN – with Cisco “helping organisations rise to this moment by delivering smarter, more secure…

-

Windows 10 End of Support – What it means for Office Apps

As of yesterday, 14 October 2025, Windows 10 has officially reached its end of support. Of course, Windows 10 will not just stop working, but after yesterday’s monthly security updates,…

-

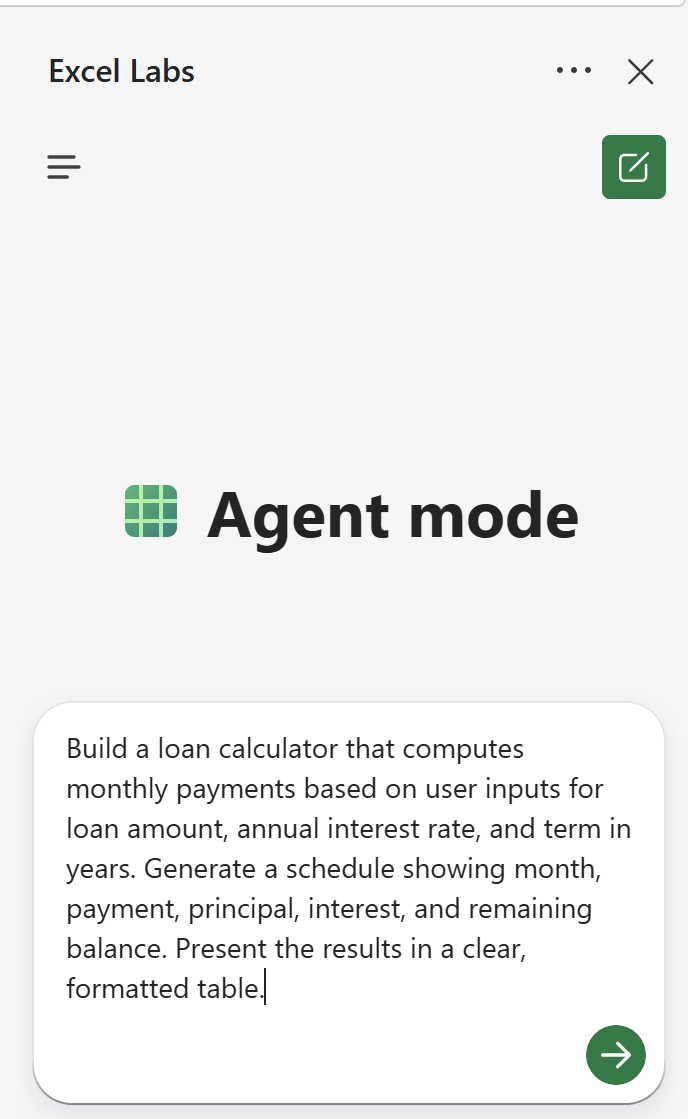

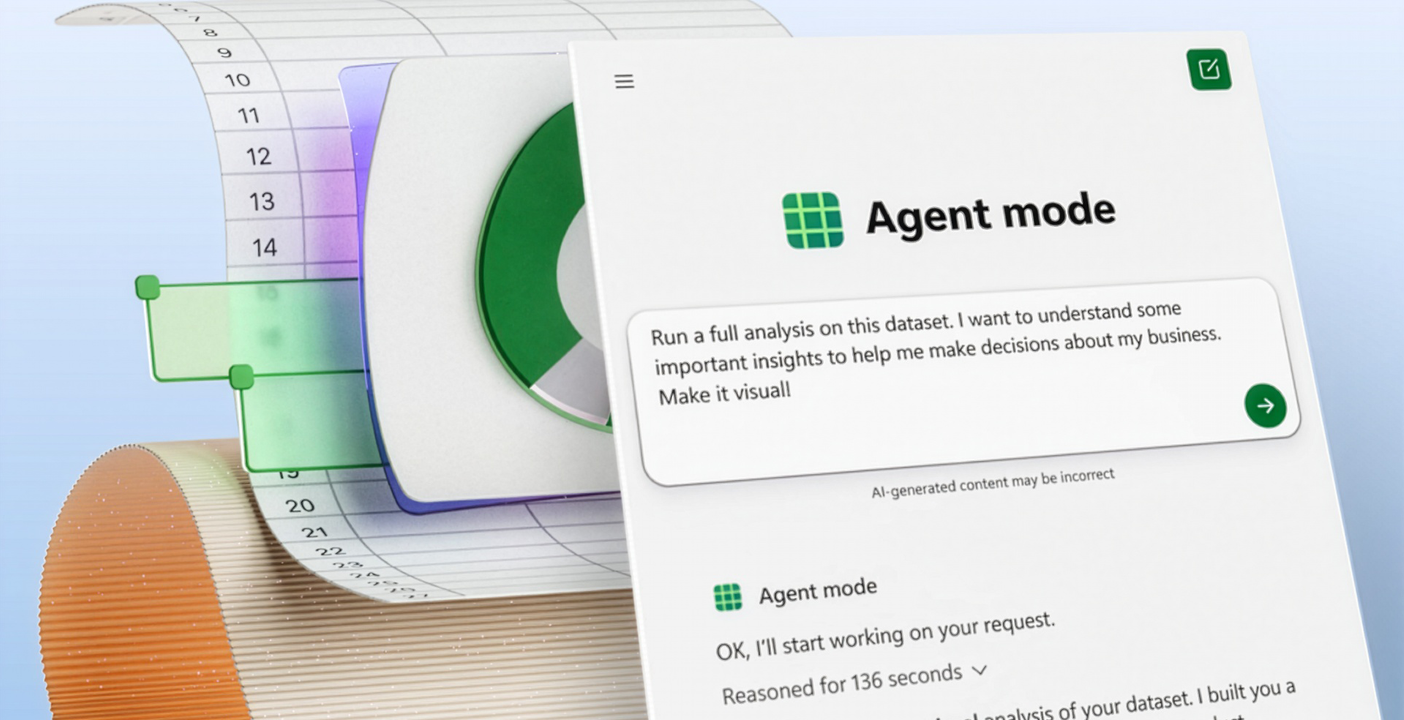

Excel’s new “Agent Mode”

Agent Mode in Excel is a new “preview” feature in Excel (online) for Microsoft Copilot Subscribers (Microsoft 365 Commercial, Personal, Family and Premium) that enables users to build and edit workbooks alongside…

-

What’s new in OneDrive + Copilot?…. Lots.

This week was Microsoft’s third annual OneDrive digital event (October 8th, 2025), where their key message was that OneDrive is much, much more than “just” file storage. Whether you are…

-

What is ‘Bring Your Own Copilot’ to Work (BYO-Copilot) ?

AI tools being used in the workplace is no longer a question of if but how and what. The question is what if you could allow this in a safe…

-

Is Agent Mode the new way to do human-agent collaboration?

Microsoft Copilot development just doesn’t sleep… This time they have just announced “agent mode”, which they claim could be a game changer in the way we (humans) work with AI agents…

-

Cisco Webex One 2025 – Chatbots to Agents

Watching this year from the sofa, #Cisco #WebexOne showcased just how far their open ecosystem, agentic AI and platform integration have come. This is my short reflection from the Webex…

-

What is the SharePoint Knowledge and what does it do?

The responses and workflows you get back from AI are only as good as the content it can reason over or leverage. If your content (data) is not in good…

-

Beyond OpenAI: Microsoft Copilot adds Claude support

Microsoft has started to broaden their AI horizons by adding their first non-OpenAI model into Copilot. Microsoft are integrating Anthropic’s Claude models into Microsoft 365 Copilot which marks…

-

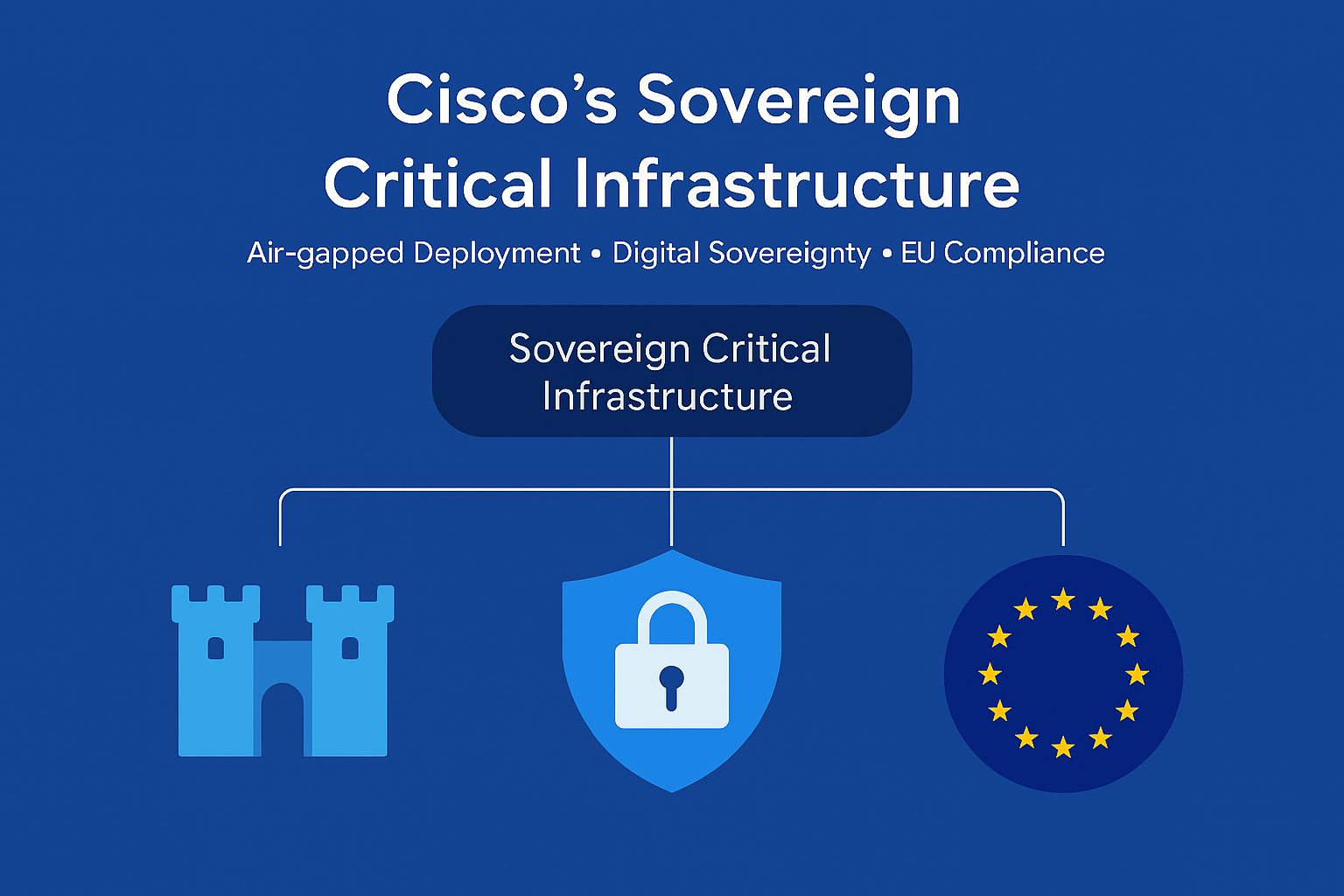

What is Cisco’s Sovereign Critical Infrastructure?

Cisco yesterday announced what they referred to as a “significant milestone” in Europe’s journey toward digital sovereignty. Their Sovereign Critical Infrastructure portfolio is a comprehensive, air-gapped solution designed to give…

-

Microsoft Power Platform Licensing Guide

Microsoft have made their monthly-updated Power Platform Licensing Deck publicly available. Now, you might think this is not worthy of a blog post, but for many organisations, this information has…

-

Teams in Microsoft 365 is back for good (but it’s your choice)

It’s back! Starting November 1, 2025, Microsoft Teams is officially “back” in the Microsoft 365 and Office 365 Enterprise suites globally, but the choice to have it not sit with…

-

Microsoft Copilot Consumer brings Memory Management and Google Drive Integration

Microsoft Copilot is adding two new major updates (this time for the consumer experience) that bring it closer to the more personalised AI experience users have been asking and…

-

Copilot Chat comes to Enterprise Office Apps for all

Microsoft is in the process of Copilotizing Business / Enterprise versions of your Office apps (Word, Excel, PowerPoint, Outlook, and OneNote) even for users that don’t have a Microsoft 365…

-

Microsoft simplifies Copilot add-ons with reduced pricing

Microsoft has confirmed in a blog that it is streamlining its Copilot add-on portfolio by incorporating its specific role-based solutions into the core Microsoft 365 Copilot subscription. Starting mid-October, Copilot…

-

Is Microsoft about to kill DocuSign?

Microsoft (after months of testing) is launching native eSignature support in Microsoft Word. How does this compete and compare to more widely known tools such as Adobe Sign? Where does…

-

Windows 11 25H2 Release Preview: What you need to know

Windows 11’s annual feature update, version 25H2 (Build 26200.5074), is now available in the Release Preview Channel. This signals that the update is nearly finalised and ready for broader deployment later this…

-

Will OpenAI’s “gpt-realtime” set a new benchmark for AI Voice?

OpenAI has introduced gpt-realtime, a new cutting-edge speech-to-speech model, alongside the general availability of its Realtime API. This release marks a significant step forward in the evolution of voice AI, particularly for…