Our social media feeds will be full of predictions for the year ahead this week. After all, 2023 was an exciting and crazy year in tech, with arguably some of the biggest advances we have seen for more than a decade. You can read my 2023 tech review here.

With all the advancements in Generative AI technology and chatbots in 2023, I have focussed my tech predictions specifically on the rise and development of Generative AI. I believe every aspect of IT is going to be “AI infused” this year as organisations enter the next level of adoption maturity, moving from “what is coming” and “what might be possible” to real business impacts and tangible examples.

#1 AI is going to keep getting better and more “intelligent”.

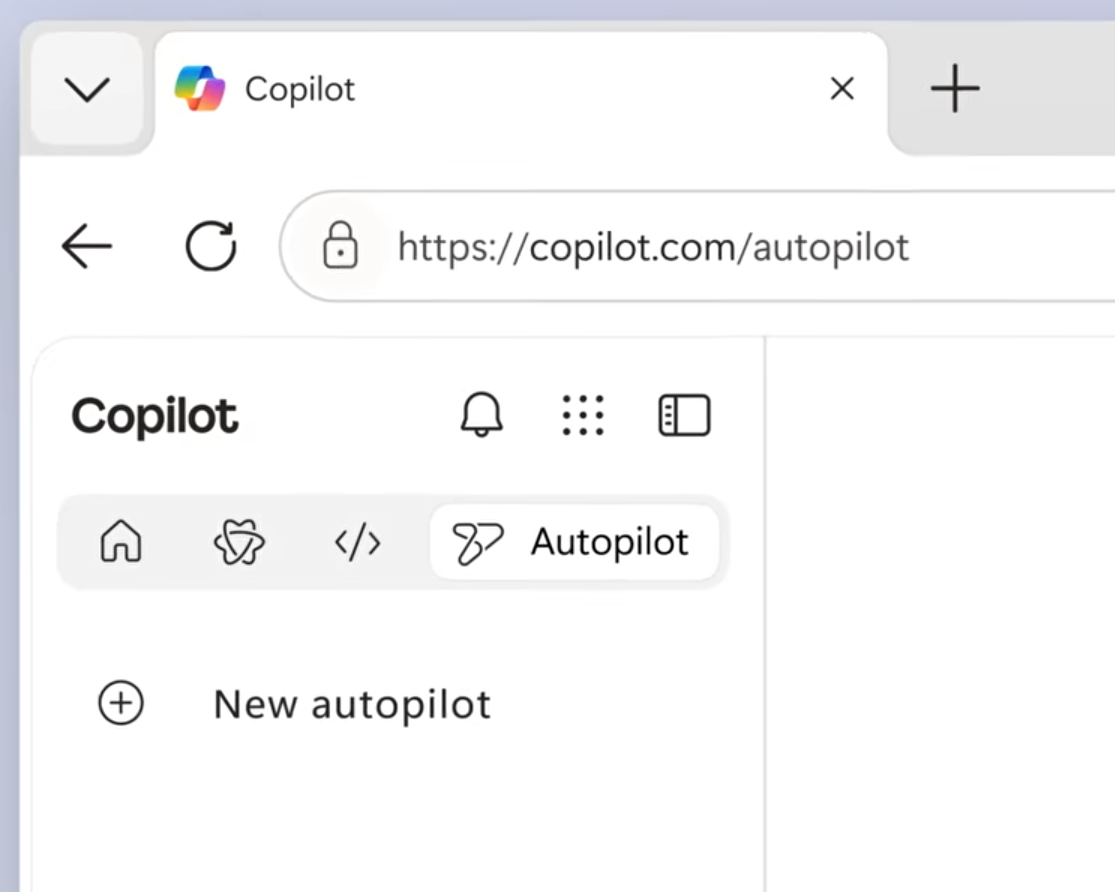

This is quite a no-brainer really. We already know that OpenAI has big plans for 2024 and, with Google hot on their heels with Gemini, I would expect to see the release of ChatGPT 4.5 (or even 5) at some point in the first half of 2024. We could also see image technology like DALL-E shift into video creation for the masses and not just images. There will also be more competition to win the Gen AI race from Microsoft, Apple, Google and Amazon. This could be the new browser and search engine wars. Microsoft will adopt the newer ChatGPT and DALL-E 3 tools into their Copilot products.

#2 Businesses will invest more in AI and core technology training.

Outside of using Generative AI to help us write emails and documents, many organisations will be looking to AI to improve business automation and data processes in ways that complement and enhance human capabilities.

With the output of most of the AI tools we will use in the enterprise being reliant on the data they use as a reference point or to operate, there will be a need to invest in skills around the fundamentals of AI and big data analytics. People will need to learn how to interface with AI, how to write good prompts that deliver the right outcome and how to leverage these new tools to radically improve productivity and outcomes.

At the more basic levels, there will also be a need to drive good adoption of the base technologies already used within organisations. From good data labelling and classification, to simply working with and storing files in the right places in Office 365, to using new tools such as Copilot in Edge and Microsoft 365 and Intelligent Recap in Teams, businesses will need to revisit the level of IT training given to employees, encouraging Centres of Excellence and building technology sponsors or mentors across different teams.

Training users on what tools to use, how to use them and when will be key and is something many organisations still do badly.

#3 We will see more Legal Claims against AI.

Whatever happens in terms of the tech advances of AI, there is no doubt that we will see a leap in the number of legal claims from authors, publishers and artists against companies who have been building AI products. After all, we’ve already seen a few in 2023.

The reason for this is that at the heart of any Generative AI product are large language models (LLMs). The leading AI companies, such as Google, Microsoft and OpenAI, have worked really hard to ensure they adhere to and respect copyright laws while “training” their models. In fact, Microsoft are so bold about this that they even put in place a copyright protection pledge to protect companies back in September last year.

Just last week (December 2023), the New York Times filed a huge lawsuit against OpenAI and Microsoft for copyright infringement. They claim that their journalism was being used to train and develop ChatGPT without any form of payment.

OpenAI and Microsoft are also caught up in another lawsuit over the alleged unauthorised use of code in their AI tool GitHub Copilot. There have already been other lawsuits against developers of generative AI products, including Stability AI and Midjourney, in which artists have accused the developers of training text-to-image generators on their copyrighted artwork.

The legal battles of 2023 highlight some of the complex and evolving issues surrounding intellectual property rights with the development and use of AI.

As 2024 gets underway, I suspect we will see more examples (especially if the New York Times case is successful).

#4 The rise of robust governance policies.

As we move from proofs of concept and ideation to real, proven examples of how these AI tools can be used in our daily lives, I think we will see an increase in regional, state and local companies putting in place robust governance policies, processes and tools, including the testing and validation of AI-generated content. This will require new tools for ensuring there are appropriate guard rails and monitoring throughout.

Organisations will need to have clear AI policies in place that map out what AI products and tools they allow, guidance around content and image generation as well as what they view as ethical, responsible, and inclusive use of AI, outside of the policies that the AI companies have in place and the guidance they provide.

Education will also be key to ensure that employees can learn and put into practice the skills needed to use AI tools in the workplace, and to ensure the above checks and policies are implemented. Creating centres of excellence and sharing good practice will also be key to ensure employees and organisations get maximum benefit from using AI.

#5 Expect to see more deception, scams and deep fakes.

We will likely see more deception and trickery for financial gain this year as fake person generators and deep fake voice and video become more widespread tools for phishing and scams. We have already seen cases (and warnings from banks) where voice cloning technologies can accurately replicate human voices and threaten voice print based security systems. In 2024, we are likely to see this go further into many more areas of personal, corporate and political exploitation and deception.

Left unsupervised and unprotected, the rapid growth of digital deception poses a huge risk that security response and protection organisations will need to address. I think we will see more guidance, more safeguards, specialised detection tools, increased awareness and increased use of multi-factor protection. A new method of digital prints to detect such fakes is going to be critical if people and organisations are going to remain confident that these technologies can’t be beaten by deep fakes.

To protect the reliability of information in a fast-changing digital world, it will be essential to have the tools and skills to detect and counteract AI-generated fabrications.

#6 Proliferation of “new” wearable AI technology.

I expect to see a huge increase in products and services around AI wearables or AI-powered wearables. This will further drive the already growing shift away from traditional screen-focused devices towards more integrated, context and environment aware devices that provide up-to-date monitoring, fuelling data driven insights and decisions in our personal and professional lives.

Applications: This could open up huge advances in, for example, continuous health monitoring devices such as blood glucose monitors, anxiety detection, cancer scanning, gut health and even AI controlled insulin pumps. In sports we could see a new level of performance monitoring and tracking, with huge sponsorship deals by leading health and fitness companies. This could also lead to more data for unique advertising revenues…

Apple have also recently said they are working with OpenAI and plan to leverage edge computing (their devices) by running AI processes directly on those devices rather than relying solely on cloud connected AI services.

Security compromises and wider privacy concerns are likely to grow with this shift, especially as these devices (in a similar way to health trackers today) will have the ability to record and process huge amounts of personal, health and other data. In the case of smart glasses, for example, this could also lead to new laws and legislation (and restrictions) to ensure privacy isn’t compromised by recording or capturing video without permission or consent.

#7 Cyber attacks and defence will become “more AI driven”.

With any new technology, security plays a vital role. I think we will see a massive change to the level of attacks, and therefore to the protection and detection needed from cyber security systems this year. From an attacker perspective, it is likely that the use of Machine Learning and AI will continue to amplify the sophistication and effectiveness of cyber attacks. Expect more convincing, personalised tactics, including advanced deepfakes and intricate, personal phishing schemes, with AI used to craft social engineering attacks that make it increasingly difficult to differentiate between legitimate and deceptive communications, both externally and from within the organisation. We will also see systems customise attacks based on industry, location and known threat protection landscape.

From a defence perspective, the fight against AI attacks will also be AI-centric, with new AI-based detection tools and applications that work in real time. Identity will be the primary defence and attack vector. For example, Microsoft’s Security Copilot, which is currently in preview, promises to be the first generative AI security product to help businesses protect and defend their digital estate at AI speed and scale. These tools, in partnership with people powered response and remediation teams, should at least even the fight between the AI powered attackers and the defenders that are needed to keep our businesses, industry and services safe.

Without playing the War Games/Terminator scare games, the threat is real: bad actors, nation state attackers, organised cyber crime divisions and opportunistic hackers have a new set of tools available to help them. The battle between attackers and the biggest Cyber Security MSPs, Cloud giants and businesses is going to heat up. We will see victims and we will see scares. The battle against cyber threats is becoming ever more complex and intertwined with AI.

Businesses will need a more nuanced and advanced approach to cybersecurity. That will mean simplification, standardisation and, most likely, reducing the number of different disconnected security products they have and adopting a more defence in depth approach with AI powered SIEM tools or fully outsourced Managed Security.

#8 Zero Trust will finally be taken seriously.

To wrap it up: with the growth of AI into every part of our personal and work lives, working across more devices, applications, and services, the environment that IT traditionally controlled will continue to move outside of its control.

With the rise of AI and, more importantly, AI being used to drive more sophisticated attacks that compromise personal devices used to access corporate data, I think we will see more organisations adopting Zero Trust security models whilst consolidating their point product solutions into a more streamlined and unified approach.

Zero Trust is a security strategy. It is not a product or a service, but an approach to designing and implementing the following set of security principles, regardless of what technology products or services an organisation uses:

- Verify explicitly.

- Use least privilege access.

- Assume breach.

The core principle of Zero Trust is that nothing inside or outside the corporate firewall can be trusted. Instead of assuming safety, the Zero Trust model treats every request as if it came from an unsecured network and verifies it accordingly. The motto of Zero Trust is “never trust, always verify.”
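To make the three principles a little more concrete, here is a minimal Python sketch of how a single access request might be evaluated. Everything in it is hypothetical and for illustration only: the `AccessRequest` fields, the `PERMISSIONS` table and the `evaluate` function are my own assumptions and do not represent any vendor's product or API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessRequest:
    user: str
    mfa_passed: bool            # identity verified with a second factor
    device_compliant: bool      # device meets the organisation's health policy
    on_corporate_network: bool  # recorded, but never used to grant trust
    resource: str
    action: str


# Hypothetical grants: each user holds only the permissions they need.
PERMISSIONS = {
    "alice": {("payroll-db", "read")},
    "bob": {("wiki", "read"), ("wiki", "write")},
}


def evaluate(request: AccessRequest, audit_log: list) -> bool:
    # Verify explicitly: check identity and device health on every request.
    verified = request.mfa_passed and request.device_compliant
    # Use least privilege: allow only actions that were explicitly granted.
    granted = PERMISSIONS.get(request.user, set())
    permitted = (request.resource, request.action) in granted
    # Assume breach: being on the corporate network earns no trust,
    # and every decision (allow or deny) is logged for later review.
    allowed = verified and permitted
    audit_log.append((request.user, request.resource, request.action, allowed))
    return allowed
```

Note that `on_corporate_network` is deliberately ignored when making the decision. In this sketch a request from inside the firewall is treated exactly the same as one from a coffee shop, which is the “never trust, always verify” idea in practice.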

We also know many organisations have a huge amount of digital debt when it comes to security, with lots of point products, duplicate products and disjointed systems. I think we will see organisations focus more around:

- Closing the gaps in the Zero Trust strategy – making sure they have adequate protection at each of the layers

- Focusing on data protection to minimise data breach risk – things like Data Loss Prevention, encryption, conditional access, labelling and data classification etc.

- Doing more with less – by removing redundant or duplicate products and aligning with tools that better integrate with one another and that can be managed holistically through a single pane of glass.

- Doubling down on Identity and Access control – moving to passwordless authentication methods, tighter role based access control, time-based access for privileged roles and stricter conditional access policies.
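As a rough illustration of the time-based access idea in the last point, the sketch below grants a privileged role that expires automatically. The `RoleGrant` class and the `grant_role` and `is_active` helpers are hypothetical names I have made up for this example; real identity platforms implement this in their own ways.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass(frozen=True)
class RoleGrant:
    user: str
    role: str
    expires_at: datetime


def grant_role(user: str, role: str, minutes: int,
               now: Optional[datetime] = None) -> RoleGrant:
    # The privileged role is issued for a fixed window, not permanently.
    if now is None:
        now = datetime.now(timezone.utc)
    return RoleGrant(user, role, now + timedelta(minutes=minutes))


def is_active(grant: RoleGrant, now: Optional[datetime] = None) -> bool:
    # Once the window has passed, the role is no longer usable
    # and the user has to request it again.
    if now is None:
        now = datetime.now(timezone.utc)
    return now < grant.expires_at
```

The design choice here is that elevated access is the exception rather than the default, so a compromised admin account is only dangerous for as long as a grant is live.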

I also think that Generative AI has huge potential both to strengthen our awareness of data security and to add an additional layer of visibility and protection. I expect we will see admin tools become smarter at spotting information oversharing, pockets of risk and potential compromise, and at taking action (expect more premium SKUs) to close the gaps, inform information owners or alert Sec Ops teams. I think we will see organisations spend more time looking at risk management and insider risk too.

I could probably go on, as there is so much happening, and the pace we saw in 2023 will only continue, if not increase.

Conclusion

In conclusion, this article has discussed some of the major trends and my predictions for AI in 2024, based on the developments, achievements, rumours and general trajectory seen last year.

In short, my predictions include the improvement and competition of generative AI models, the need for more AI and data skills training, the legal and ethical challenges of AI-generated content, the rise of AI governance and security policies, the increase of deception and deepfakes, the proliferation of AI wearables, and the role of AI in cyberattacks and defence.

These trends highlight both the opportunities and risks of AI for personal, professional, and societal domains, and the importance of being aware and prepared for the impact of AI in the near future.
